zkash

@asyncakash

learning to make gpus go brrr | 🦀 | prev: @availproject, @puffer_finance, @class_lambda, topology | alum @iitroorkee

first forgot to claim TIA, this time forgot to claim MON 😵 am i cooked chat 😭

Binance providing euthanasia

Billionaire glowups are way too predictable. I’m sure Vitalik is gonna surprise us in 10 years if not less 🔥

why is elon looking like his viral chinese parody guy

Let’s fucking goooooo! 3+ hours with the great rocket man @elonmusk open.spotify.com/episode/6vBr2k…

Open Sourcing Parallax: Your Sovereign AI OS. The easiest way to host AI applications that are entirely yours.

and still claude gives you uv pip

the chatgpt deep research task I requested last night is still running lol
Seems like the scheduler kicked off my task before completion welp 🥲
@OpenAI compensate me with one free dr query now 😤

Some unstructured thoughts on what creates abundance mindset..

New post in the GPU 𝕻𝖊𝖗𝖋𝖔𝖗𝖒𝖆𝖓𝖈𝖊 Glossary on memory coalescing -- a hardware feature that CUDA programmers need to mind to get anywhere near full memory bandwidth utilization. The article includes a quick µ-benchmark, reproducible with Godbolt. What a tool!

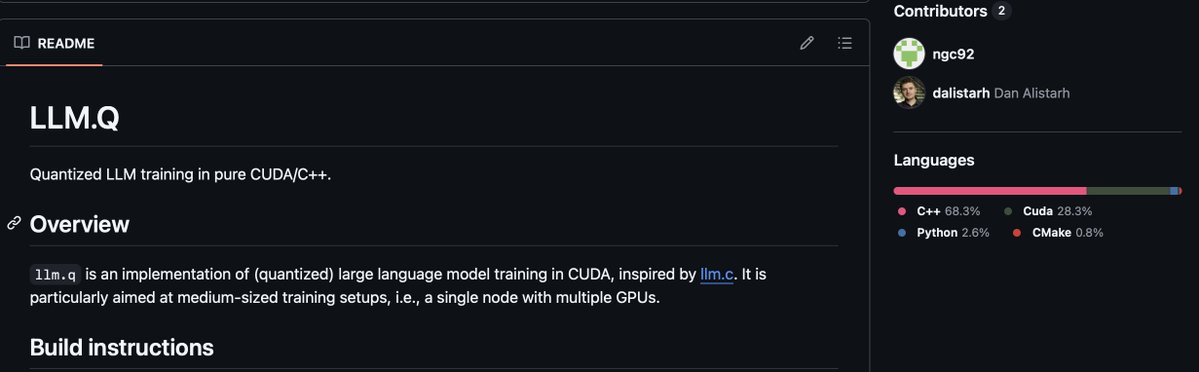

it's insane to me how little attention the llm.q repo has
it's a fully C/C++/CUDA implementation of multi-gpu (zero + fsdp), quantized LLM training with support for selective AC
it's genuinely the coolest OSS thing I've seen this year (what's crazier is 1 person wrote it!)

We reverse-engineered Flash Attention 4.

Really enjoyed @samsja19’s talk on the challenges of decentralized training (e.g. DiLoCo) under low-bandwidth conditions. Was surprised to learn how much weather can destabilize training 🤯 @PrimeIntellect is doing some wild stuff with decentralized RL! 🚀 Thanks for the…

too much new learning material! we're releasing a few chapters of hard study on post training AI models. it covers all major aspects plus more to come.
- Evaluating Large Language models on benchmarks and custom use cases
- Preference Alignment with DPO
- Fine tuning Vision…

hi! if you’re interested in using or writing mega kernels for AI (one big GPU kernel for an entire model) you should tune in to today’s @GPU_MODE livestream
today in ~3 hours we have the authors of MPK talking about their awesome new compiler for mega kernels!
see you there :)

I was lucky to work in both China and the US LLM labs, and I've been thinking this for a while. The current values of pretraining are indeed different:
US labs be like:
- lots of GPUs and much larger flops run
- Treating stabilities more seriously, and could not tolerate spikes…

I bet OpenAI/xAI is laughing so hard, this result is obvious tbh, they took a permanent architectural debuff in order to save on compute costs.

Qwen is basically the Samsung (smartphone) of llms. They ship nice new models every month.

China saved opensource LLMs, some notable releases from July only
> Kimi K2
> Qwen3 235B-A22B-2507
> Qwen3 Coder 480B-A35B
> Qwen3 235B-A22B-Thinking-2507
> GLM-4.5
> GLM-4.5 Air
> Qwen3 30B-A3B-2507
> Qwen3 30B-A3B-Thinking-2507
> Qwen3 Coder 30B-A3B
US & EU need to do better

imagine trying to “learn to code” in cursor when the tab key is basically god mode 💀

We've trained a new Tab model that is now the default in Cursor. This model makes 21% fewer suggestions than the previous model while having a 28% higher accept rate for the suggestions it makes. Learn more about how we improved Tab with online RL.

ai bros really out here teaching each other how to draw assholes 😭
