“That’s one small [MASK] for [MASK], a giant [MASK] for mankind.” – [MASK] Armstrong Can autoregressive models predict the next [MASK]? It turns out yes, and quite easily… Introducing MARIA (Masked and Autoregressive Infilling Architecture) arxiv.org/abs/2502.06901

So excited to present Ctrl-G **Adaptable Logical Control for Large Language Models** TODAY at #NeurIPS2024 West Ballroom 4:30 - 7:30 pm. Ctrl-G is THE solution to LLM fill-in-the-middle generation, numerical planning and structured output. Stop by to discuss more!

Proposing Ctrl-G, a neurosymbolic framework that enables arbitrary LLMs to follow logical constraints (length control, infilling …) with 100% guarantees. Ctrl-G beats GPT4 on the task of text editing by >30% higher satisfaction rate in human eval. arxiv.org/abs/2406.13892

📢 I’m recruiting PhD students @CS_UVA for Fall 2025! 🎯 Neurosymbolic AI, probabilistic ML, trustworthiness, AI for science. See my website for more details: zzeng.me 📬 If you're interested, apply and mention my name in your application: engineering.virginia.edu/department/com…

🚨 Exciting Opportunity! 🚨 I’m looking for PhD students to join my team @ImperialEEE and @ImperialX_AI! 🌍🔍 Research Topic: Neuro-symbolic AI with a focus on making AI safer. 💡🤖 Full scholarships available! 🎓💰 Interested? Email me at: [email protected] [1/2]

Excited to share our work on LLM tokenization, led by the awesome @renatogeh. We find significant boosts in downstream performance, by probabilistically interpreting the space of tokenizations of a text. A bit of probabilistic reasoning goes a long way!

Where is the signal in LLM tokenization space? Does it only come from the canonical (default) tokenization? The answer is no! By looking at other ways to tokenize the same text, we get a consistent boost to LLM performance! arxiv.org/abs/2408.08541 1/5

Super cool work on discretizing probability distributions with *exponential* gains in succinctness! Recommended reading for probabilistic inference folks

Are you looking for an inference algorithm that supports your discrete-continuous probabilistic program? Look no further! We have developed a new probabilistic programming language (PPL) called HyBit that provides scalable support for discrete-continuous probabilistic programs.

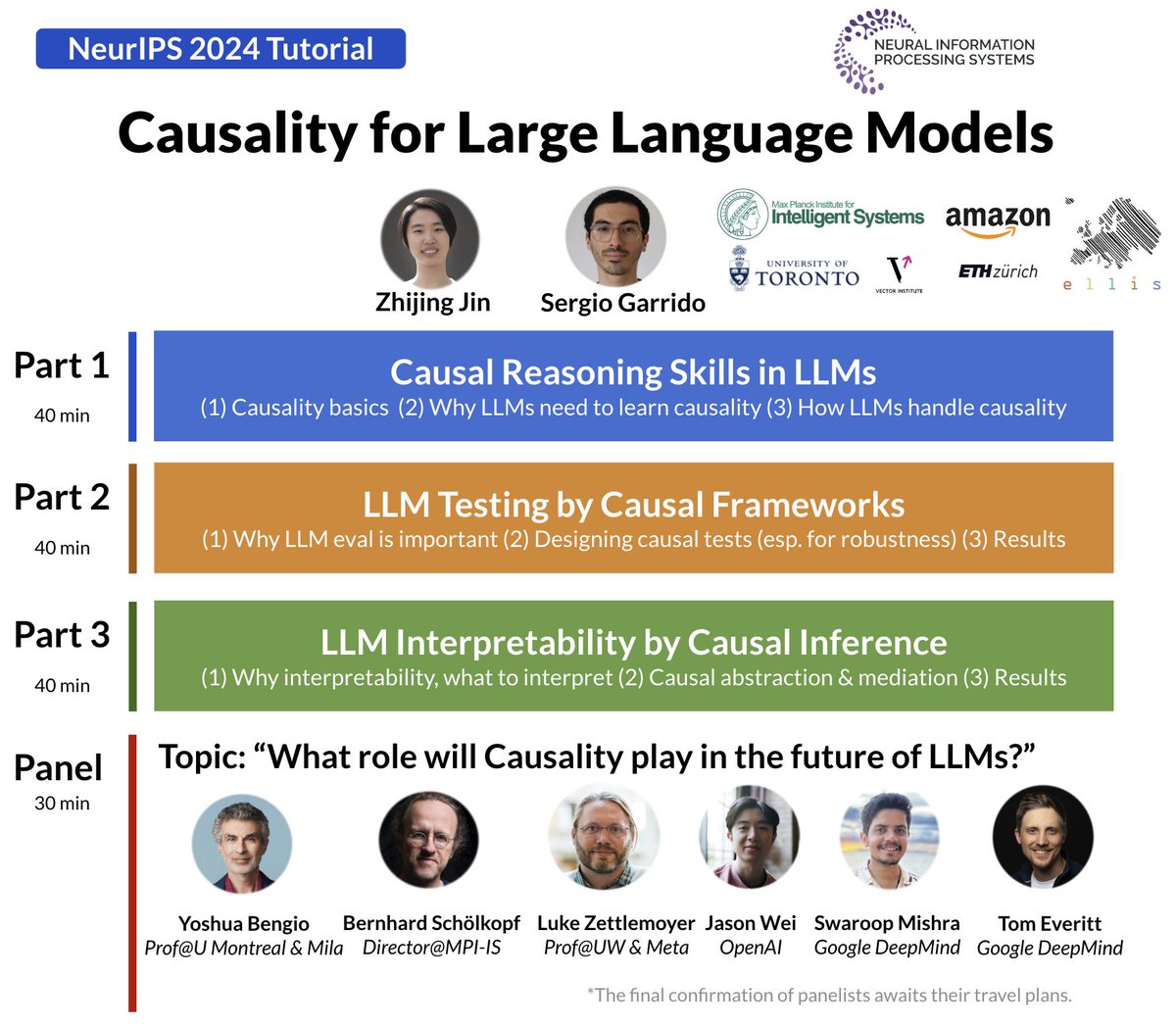

We will organize a "Causality for LLMs" Tutorial #NeurIPS2024 @NeurIPSConf. Happy to contribute to our community an intro of meaningful topics in Causal LLMs. And super excited for our panel w/ Yoshua, @bschoelkopf @LukeZettlemoyer @_jasonwei @Swarooprm7 @tom4everitt. Stay tuned!

United States 趨勢

- 1. Texas 155K posts

- 2. 3-8 Florida 2,113 posts

- 3. #JimmySeaFanconD1 266K posts

- 4. Austin Reaves 12.7K posts

- 5. #HookEm 10.6K posts

- 6. Sark 5,160 posts

- 7. Jeff Sims 1,684 posts

- 8. Aggies 9,363 posts

- 9. Arch 25.1K posts

- 10. #DonCheadleDay 1,221 posts

- 11. Georgia 49K posts

- 12. Arizona 32.3K posts

- 13. Life is 10% 2,601 posts

- 14. #LakeShow 3,690 posts

- 15. Marcel Reed 4,470 posts

- 16. Elko 3,054 posts

- 17. Katie Miller 2,569 posts

- 18. Sylus 95.9K posts

- 19. Banana Fish 9,606 posts

- 20. SEC Championship 5,329 posts

Something went wrong.

Something went wrong.