James Lucas

@james_r_lucas

Research Scientist @ NVIDIA Machine Learning PhD Candidate @ University of Toronto; Vector Institute

You might like

I'm super excited to share that I'll be joining @nvidia as a research scientist early next year! I feel very fortunate that I can continue to work with such an amazing team, led by @FidlerSanja. I'll be moving back to the UK (!) and I can't wait to start this next chapter.

Generating nice meshes in AI pipelines is hard. Our #SIGGRAPHAsia2024 paper proposes a new representation which guarantees manifold connectivity, and even supports polygonal meshes -- a big step for downstream editing and simulation. (1/N) SpaceMesh: research.nvidia.com/labs/toronto-a……

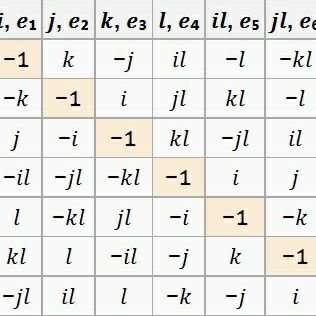

Our work builds closely on DyHPO (arxiv.org/abs/2202.09774 ) with Martin Witsuba, Arlind Kadra, and @josifgrabocka, as well as Permutation-invariant Graph Metanetworks (research.nvidia.com/labs/toronto-a…) with @dereklim_lzh, @HaggaiMaron, @MarcTLaw, myself and @james_r_lucas.

After a hiatus of three years, I'm back to writing blog posts on nice math that deserves more attention. This time, I'm going to write a two part blog post on a bit of a hot take: Everyone should learn optimal transport. mufan-li.github.io/OT1/

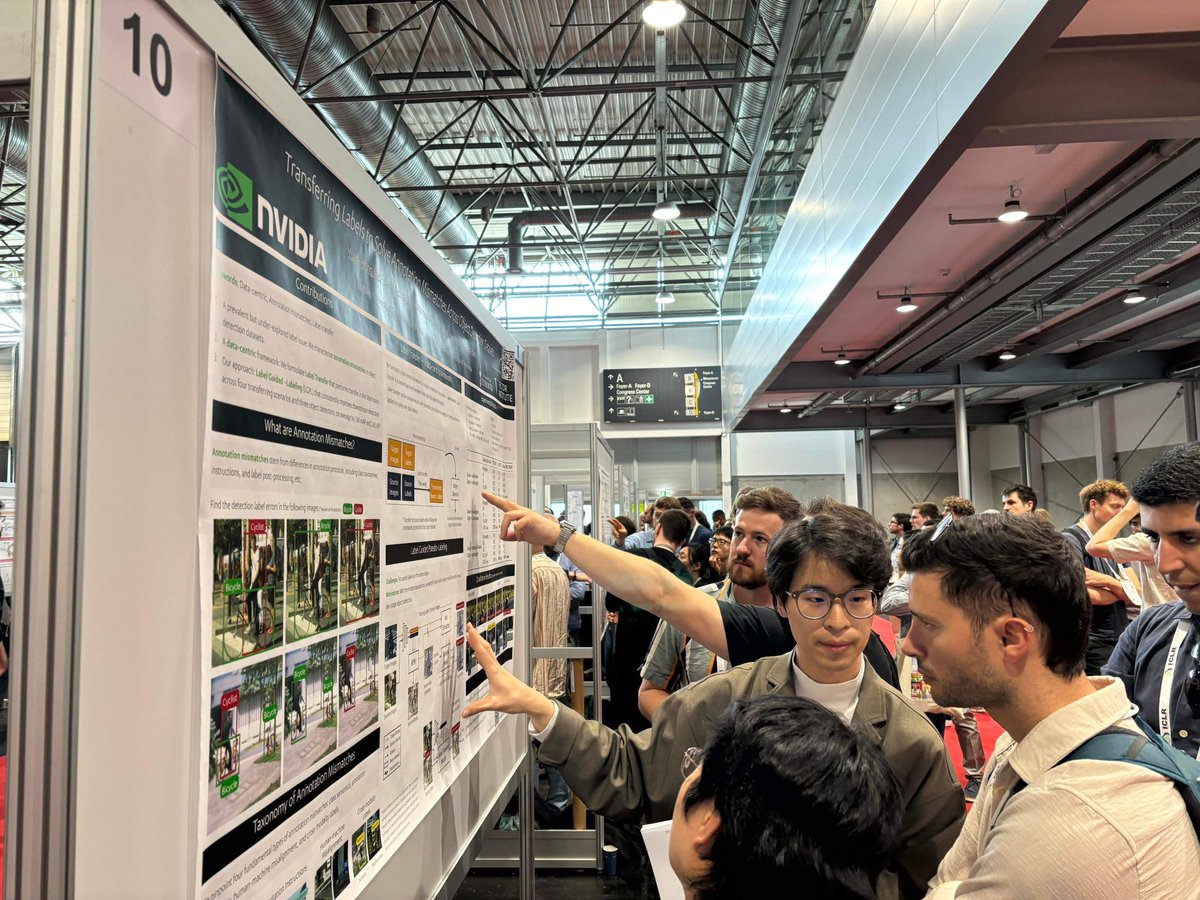

Appreciate those of you that stay till the last poster presentation and drop by our poster! Shout out to @james_r_lucas for the incredible supports and @shaohua0116 for the pic 👍

I will be presenting our ICLR paper this Friday (today) from 4:30 CEST at HallB #10 Tl;dr we identify a *real* and prevalent label issues in object detection datasets Let's chat about label errors, data centric solutions, and some of your real-world label issues!

Excited to release 🚀NeRF-XL🚀, a principled method to distribute your NeRF's parameters to multiple GPUs! research.nvidia.com/labs/toronto-a… It is heuristics-free and mathematically equiv. with training your NeRF on a single (large) GPU. Let's scale it up!🌎

New #NVIDIA ICLR spotlight paper: What if neural nets could process, analyze, interpret, and edit other neural nets weights? These models, termed metanetworks, are useful for various tasks. We develop new metanetworks for processing diverse input neural net architectures. 1/n

I am hiring a postdoc under joint supervision with Laleh Seyyed-Kalantari to work on understanding the internal representations of foundation models, with a specific focus on high-impact societal applications like medicine and law. Location is in Toronto or Waterloo. Inquire...

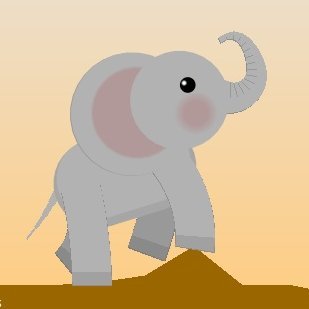

☕ The new LATTE3D model from #NVIDIAResearch turns text prompts into high-quality 3D representations of objects and animals within a second on a single RTX GPU. #GTC24 👀 nvda.ws/3TzYN3s

📢📢📢 Check out XCube, a generative model for very-high resolution 3D shapes and scenes! Project Page: tinyurl.com/ykex2wur Project Video: youtu.be/GX0lzwy8nUI Props to @xuanchi13 for leading this project!!!! [1/N]

🚀 Introducing our #SIGGRAPHAsia work “Adaptive Shells”, a novel #NeRF formulation that yields high visual fidelity and greatly accelerates rendering. TLDR: Auto-derived bounding shells result in up to 10x faster inference than InstantNGP! [1/n]

If you are in Paris, come check out our work on real-time text-to-3D generation at #ICCV2023 on Friday from 10:30 AM-12:30 PM in Room "Foyer Sud" - 035!

New #NVIDIA paper: Real-time text-to-3D generation #ICCV2023 3D generation from text requires expensive per-prompt optimization. We train 1 model on many prompts for real-time generalization to unseen prompts, interpolations and more! ATT3D details: research.nvidia.com/labs/toronto-a…

We'll be presenting our poster on Friday, 10:30am-12:30pm, #035 in Foyer Sud. Come say hi!

New #NVIDIA paper: Real-time text-to-3D generation #ICCV2023 3D generation from text requires expensive per-prompt optimization. We train 1 model on many prompts for real-time generalization to unseen prompts, interpolations and more! ATT3D details: research.nvidia.com/labs/toronto-a…

In high-stakes settings (e.g. AV), it is common practice to augment real training data with synthetic data (e.g. from a driving simulator). Naturally, we were surprised to not find principled algorithms to combine labeled sim and real data! Until now: tinyurl.com/42f327vw

How much data should you collect when starting a new ML project? What if there are multiple sources of data from different domains? We try to answer these questions in our #NeurIPS2022 paper: Optimizing Data Collection for Machine Learning. See the thread and webpage!

1/8) 87% of AI projects fail to hit production, 96% have data problems & 51% underestimate how much data they need. Our #NeurIPS2022 paper proposes learning & optimizing data collection! w/ @james_r_lucas @ALVAREZ_JOSEM @FidlerSanja @MarcTLaw Webpage: nv-tlabs.github.io/LearnOptimizeC…

Excited to share our #NeurIPS2022 @NVIDIAAI work GET3D, a generative model that directly produces explicit textured 3D meshes with complex topology, rich geometric details, and high fidelity textures. #3D Project page: nv-tlabs.github.io/GET3D/

📢[NOW LIVE] Toronto Annotation Suite (TORAS) is available to the public! TORAS is a web-based AI-powered data #annotation platform we have been developing at the @UofT for over 4 years. We hope that you find it useful in annotating your data! More info: aidemos.cs.toronto.edu/toras

🎙️Attention Neural Fields researchers!! This one is for you: today we're proud to officially release our research library #NVIDIAKaolinWisp. Wisp is packed with an interactive renderer and various building blocks for optimizing NeRF / SDF pipelines. Code: github.com/NVIDIAGameWork…

Diffusion Models are taking over Deep Generative Learning! Like to get started? 🔥 📢 We re-recorded our ~4 hour tutorial on Diffusion Models we presented at @CVPR this year (w/ @RuiqiGao & @ArashVahdat)! Youtube: youtube.com/watch?v=cS6JQp… Slides, etc.: …2-tutorial-diffusion-models.github.io

Reminder that the ICML Workshop on Distribution-Free Uncertainty Quantification starts TOMORROW! dfuq.rocks/22 We have 70 awesome papers on UQ for time-series, object detection, distribution shift, and more. All are welcome in Room 308!

🆕📜We study large language models’ ability to extrapolate to longer problems! 1) finetuning (with and without scratchpad) fails 2) few-shot scratchpad confers significant improvements 3) Many more findings (see the table & thread) Paper: [arxiv.org/abs/2207.04901] 1/

![cem__anil's tweet image. 🆕📜We study large language models’ ability to extrapolate to longer problems!

1) finetuning (with and without scratchpad) fails

2) few-shot scratchpad confers significant improvements

3) Many more findings (see the table & thread)

Paper: [arxiv.org/abs/2207.04901]

1/](https://pbs.twimg.com/media/FXbWZexXoAMQ6sf.jpg)

United States Trends

- 1. Michigan 105K posts

- 2. Ryan Day 4,264 posts

- 3. Bo Jackson 1,107 posts

- 4. Buckeyes 7,315 posts

- 5. #GoBlue 7,249 posts

- 6. #TheGame 3,123 posts

- 7. Barham 1,263 posts

- 8. Florida 106K posts

- 9. Texas 187K posts

- 10. Donaldson 1,483 posts

- 11. #SmallBusinessSaturday 2,876 posts

- 12. Malachi Toney N/A

- 13. Julian Sayin 1,307 posts

- 14. Kentucky 17.4K posts

- 15. Gus Johnson N/A

- 16. #GoBucks 4,727 posts

- 17. Ann Arbor 3,415 posts

- 18. Leeds 32.9K posts

- 19. Stoops 1,091 posts

- 20. Grade 3 3,247 posts

You might like

-

Corey Lynch

Corey Lynch

@coreylynch -

Mengye Ren

Mengye Ren

@mengyer -

Aditya Grover

Aditya Grover

@adityagrover_ -

Guodong Zhang

Guodong Zhang

@Guodzh -

Francesco Locatello

Francesco Locatello

@FrancescoLocat8 -

Ricky T. Q. Chen

Ricky T. Q. Chen

@RickyTQChen -

Jiaming Song

Jiaming Song

@baaadas -

Yuhuai (Tony) Wu

Yuhuai (Tony) Wu

@Yuhu_ai_ -

will grathwohl

will grathwohl

@wgrathwohl -

Rianne van den Berg

Rianne van den Berg

@vdbergrianne -

Harris Chan

Harris Chan

@SirrahChan -

Nan Rosemary Ke

Nan Rosemary Ke

@rosemary_ke -

Kevin Swersky

Kevin Swersky

@kswersk -

Jonathan Lorraine

Jonathan Lorraine

@jonLorraine9 -

Jiaxin Shi

Jiaxin Shi

@thjashin

Something went wrong.

Something went wrong.