#metatextgrad search results

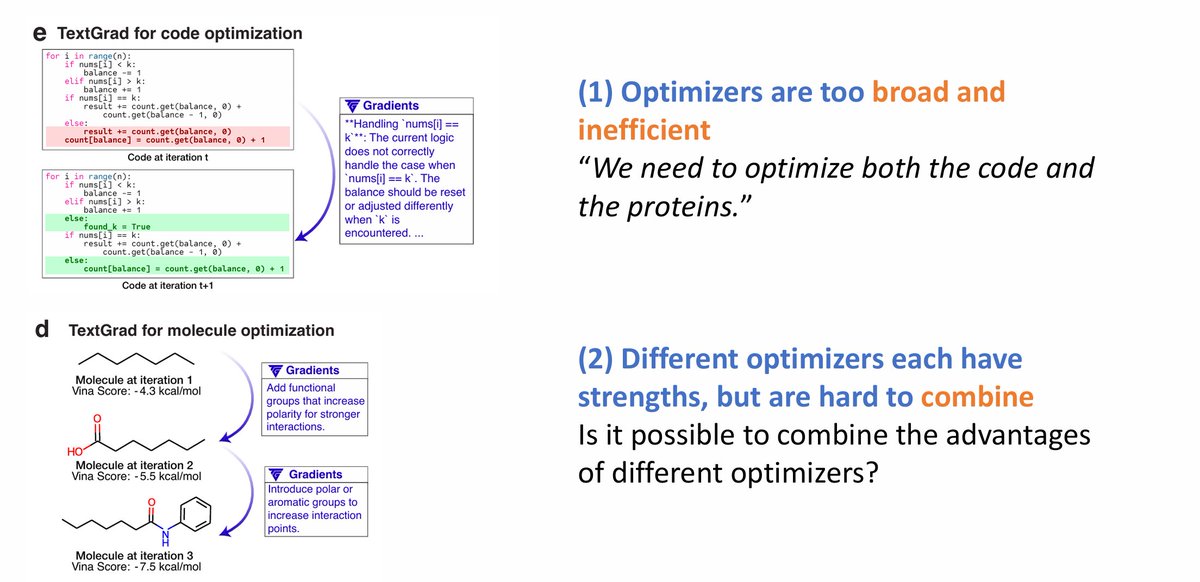

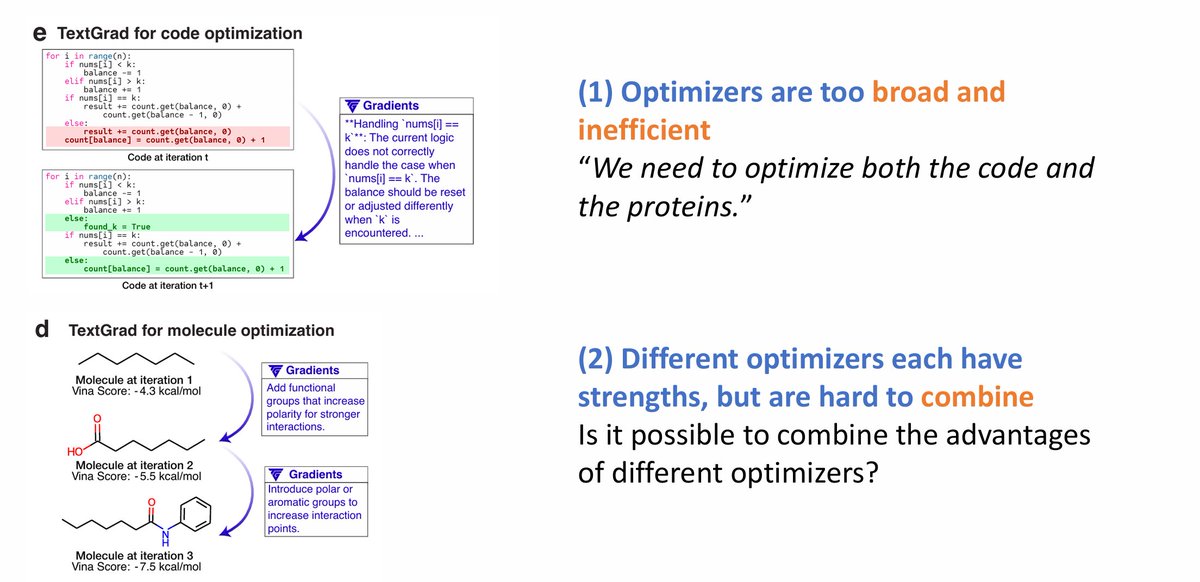

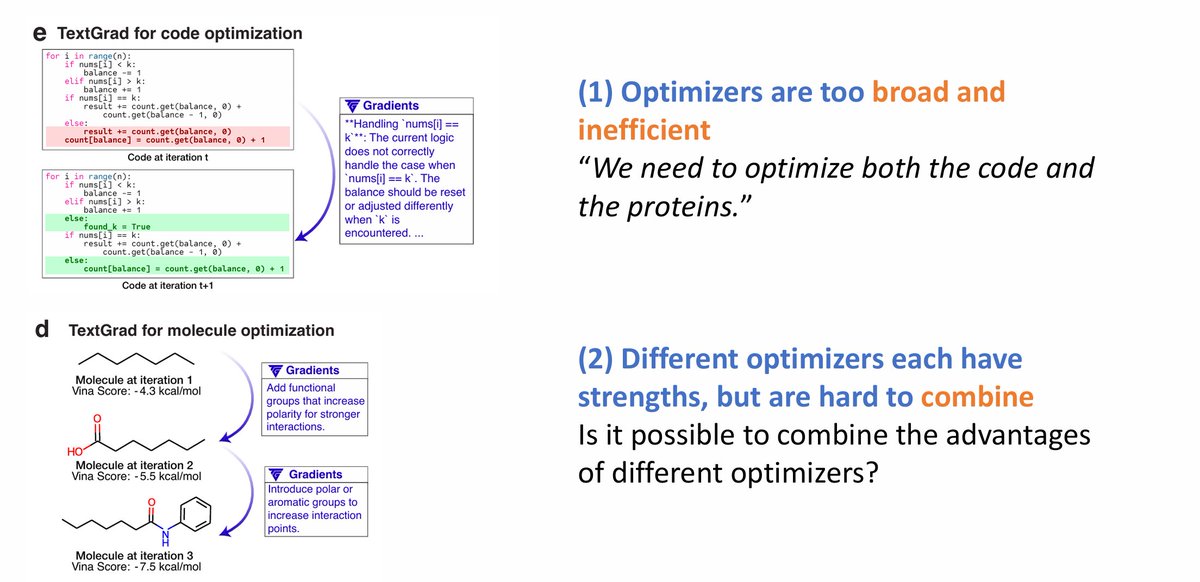

2/ Existing LM optimizers are broad and generic. #metaTextGrad automatically adapts them to specific tasks, greatly improving performance and efficiency. 📰 #NeurIPS2025 paper: openreview.net/pdf?id=10s01Yr… 🧑💻 Code: github.com/zou-group/meta… 📖 Slides: neurips.cc/media/neurips-…

Introducing #metaTextGrad🌟: a meta-optimization framework built on #TextGrad, designed to improve existing LLM optimizers by aligning them more closely with specific tasks. 📰 NeurIPS 2025 paper: openreview.net/pdf?id=10s01Yr… 🧑💻Code: github.com/zou-group/meta… 📚 Slides:…

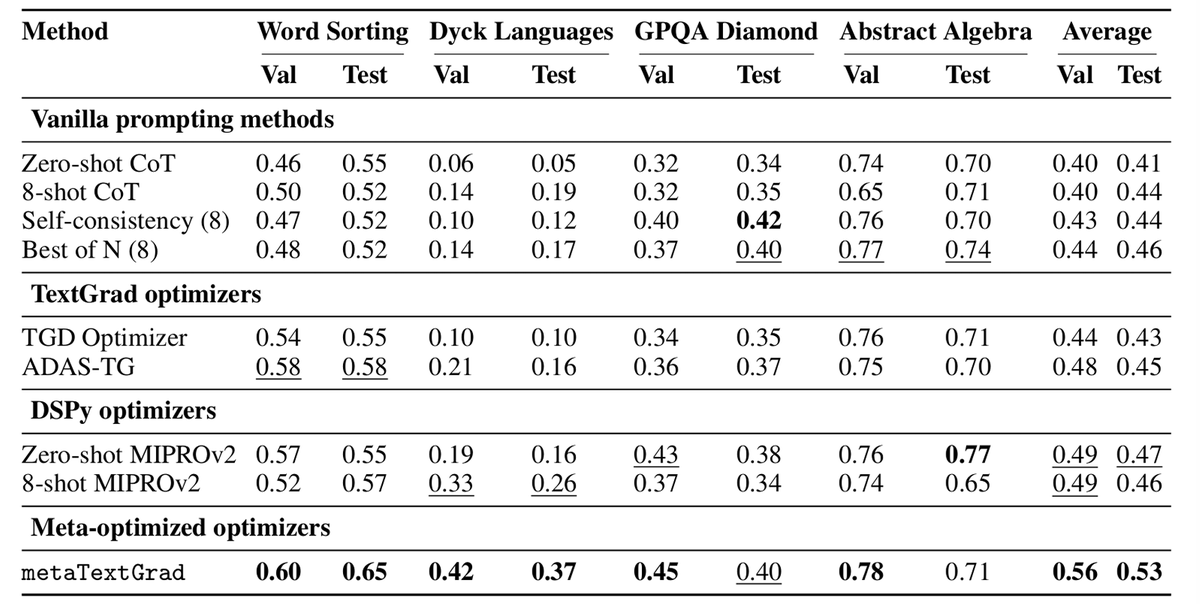

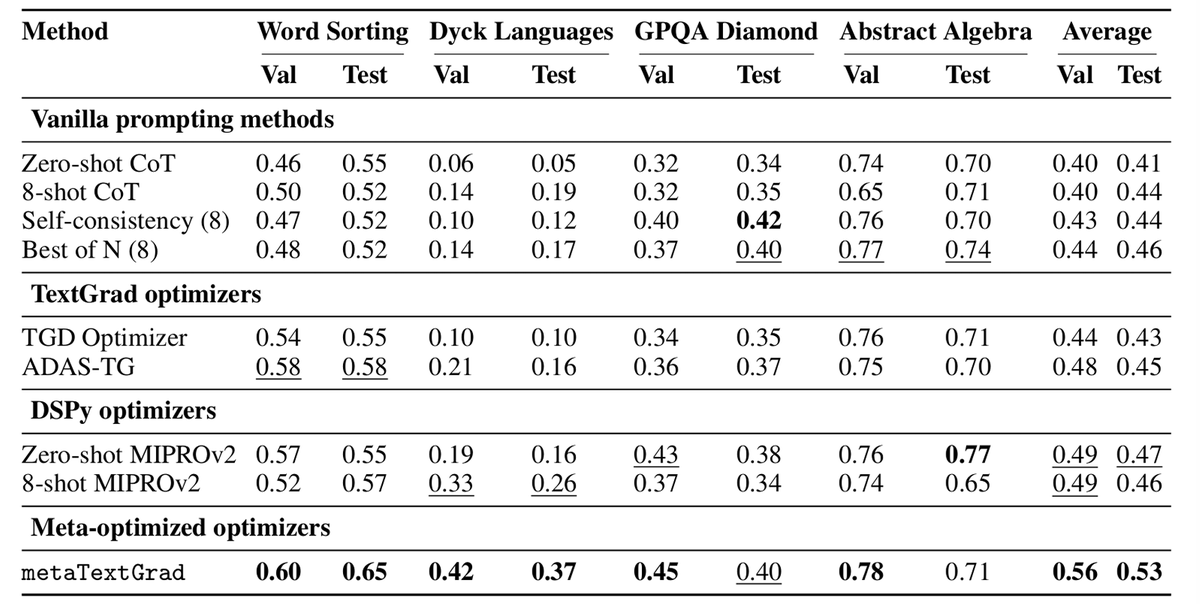

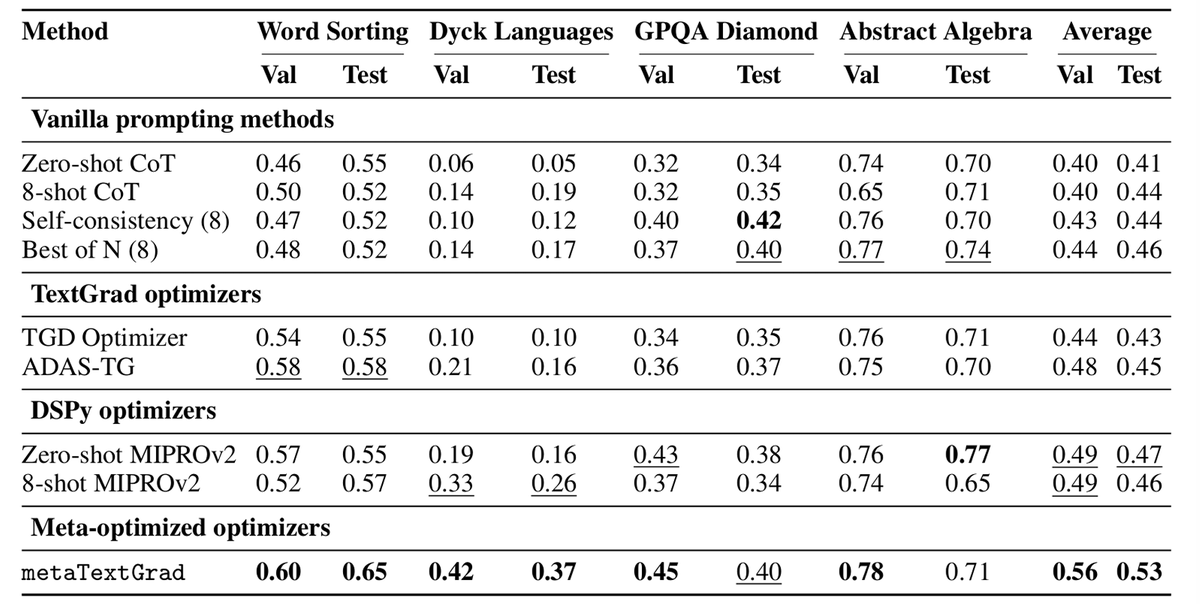

(6/8) Across various reasoning datasets, #metaTextGrad shows a marked improvement in performance over baselines.

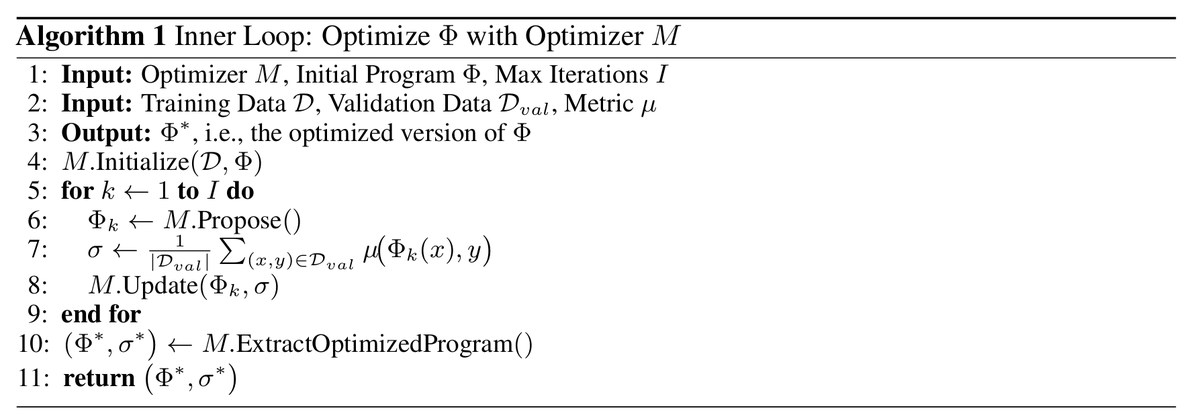

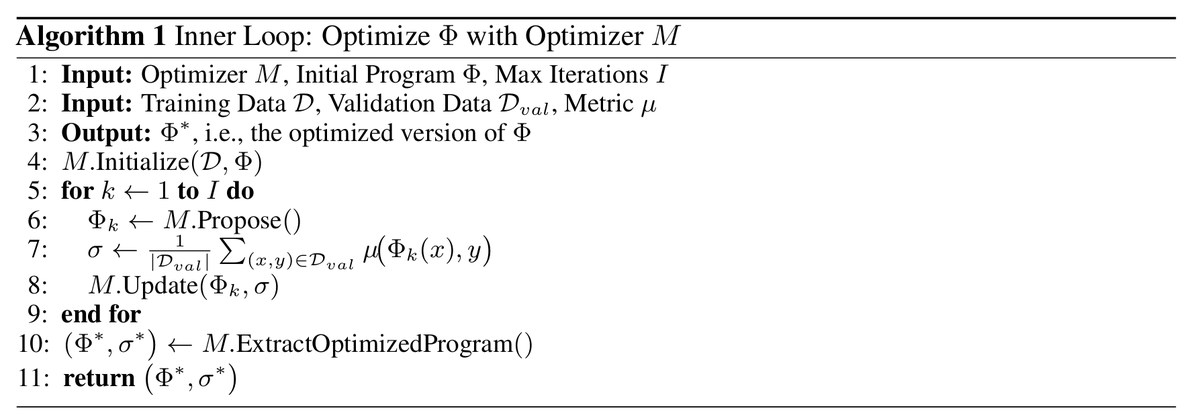

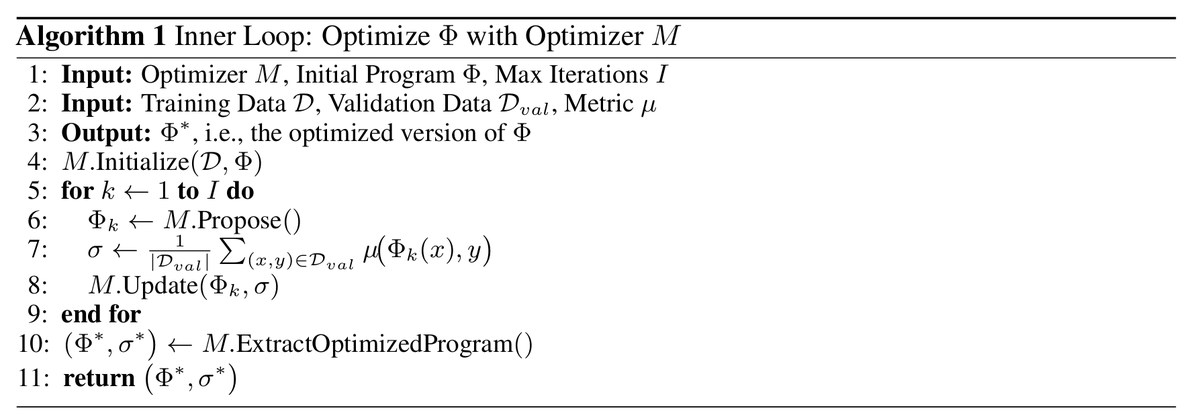

(3/8) The optimization in #metaTextGrad is divided into an inner loop and an outer loop. In the inner loop, an LLM optimizer optimizes programs, and its optimization results indicate the quality of the optimizer and how well it aligns with the task.
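
The inner/outer split can be illustrated with a deliberately tiny numeric analogy. This is a hypothetical sketch, not the metaTextGrad code or API. Here the "program" is a single number `x`, the "optimizer" is plain gradient descent whose only tunable setting is a learning rate, and the outer loop keeps whichever variant of that setting produces the best inner-loop result. In metaTextGrad the optimizer is LLM-based and the outer-loop update is not described in this post, so the names `inner_loop` and `outer_loop` and the scaling rule below are illustrative assumptions only.

```python
def inner_loop(lr: float, target: float, steps: int = 10) -> float:
    """Inner loop: the 'optimizer' (gradient descent with learning rate lr)
    optimizes the 'program' (a single parameter x) for a fixed budget.
    Returns the final loss, which the outer loop treats as feedback on
    how well this optimizer suits the task."""
    x = 0.0
    for _ in range(steps):
        grad = 2.0 * (x - target)  # derivative of (x - target) ** 2
        x -= lr * grad
    return (x - target) ** 2


def outer_loop(lr: float, target: float, rounds: int = 10) -> float:
    """Outer loop: adjust the optimizer itself using inner-loop results.
    Hypothetical rule: try scaled variants of lr and keep the one whose
    inner-loop run ends with the lowest loss."""
    for _ in range(rounds):
        candidates = [lr * 0.5, lr, lr * 1.5]
        lr = min(candidates, key=lambda c: inner_loop(c, target))
    return lr


if __name__ == "__main__":
    initial_lr = 0.01
    tuned_lr = outer_loop(initial_lr, target=3.0)
    print(f"initial lr={initial_lr}, loss={inner_loop(initial_lr, 3.0):.4f}")
    print(f"tuned lr={tuned_lr:.4f}, loss={inner_loop(tuned_lr, 3.0):.6f}")
```

The point of the analogy is only the structure: the inner loop's final score is the signal the outer loop uses to decide how the optimizer should change for this particular task.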
