MLPerf

@MLPerf

Building fair, useful, industry-standard benchmarks for ML training and inference performance of hardware, software, and services from TinyML to supercomputers

We've just released @MLPerf Mobile version 5.0.2 on the Google Play Store and GitHub. This version adds support for devices based on the Samsung Exynos 2500 SoC. play.google.com/store/apps/det…

@MLCommons & @AVCConsortium announce @MLPerf Automotive v0.5 benchmark results—a major step for transparent, reproducible automotive AI performance data. mlcommons.org/2025/08/mlperf…

Guess who was named an AI inference leader in the latest @MLPerf results released by MLCommons?

On a personal level, I loved seeing .@MLPerf being mentioned so much! I can’t wait to see more submissions from across the industry as we push for the next 1000x in machine learning performance.

The Perf Storage Benchmark Suite will be a critical resource in improving machine learning and we’re honored to have participated alongside these industry leaders and academic organizations!

Inference keeps getting faster ... and more demanding. @MLCommons MLPerf Inference 3.1 adds large language model benchmarks for inference venturebeat.com/ai/mlperf-3-1-… via @VentureBeat. "We’re evolving the benchmark suite to reflect what’s going on," @TheKanter said.

venturebeat.com

MLPerf 3.1 adds large language model benchmarks for inference

Vendor neutral, multi-stakeholder MLCommons, which allows orgs to report on AI performance, has released its second major update.

@MLCommons, we just released! New @MLPerf Inference and Storage results. Record participation in MLPerf Inference v3.1 and first-time MLPerf Storage v0.5 results highlight the growing importance of GenAI and storage. See all the results and learn more mlcommons.org/en/news/mlperf…

mlcommons.org

MLPerf Results Highlight Growing Importance of Generative AI and Storage - MLCommons

Latest benchmarks include LLM in inference and the first results for storage benchmark

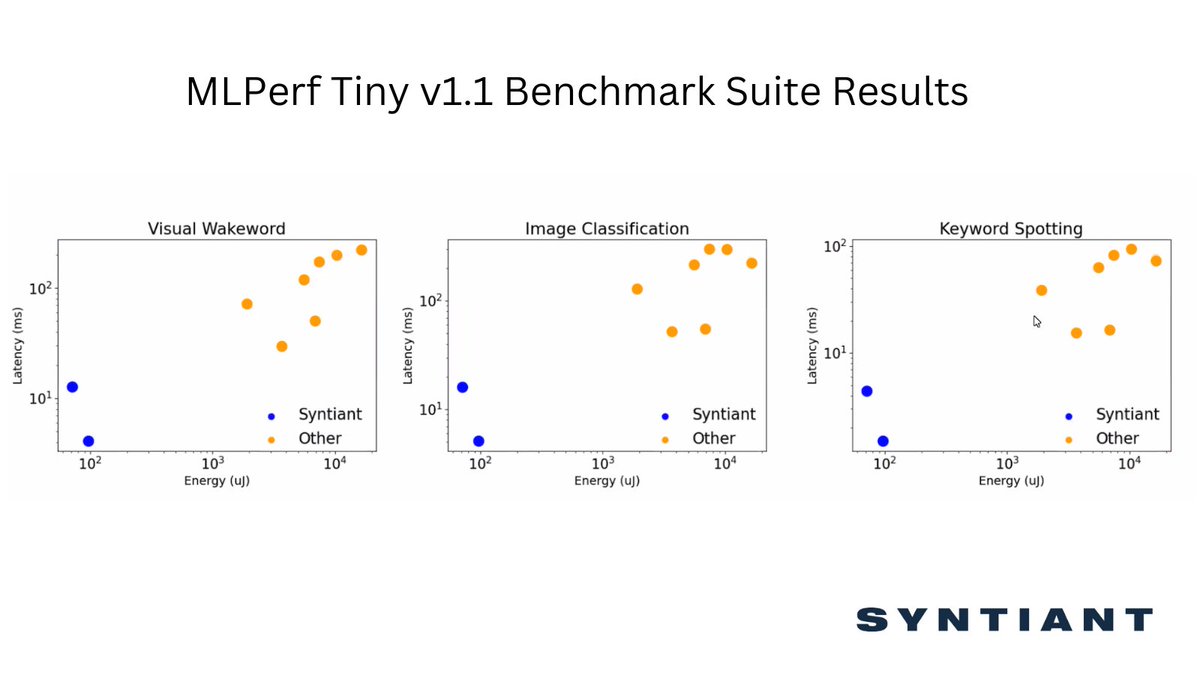

AI semiconductor company @Syntiantcorp announced its Syntiant Core 2™ programmable #deeplearning #architecture ranked as utilizing the lowest power #energy across three categories in @MLCommons @MLPerf Tiny v1.1 benchmark suite. Read more about energy performance below:

Great news! Our second-generation architecture achieved the lowest power energy performance across three categories in the most recent @MLCommons @MLPerf Tiny v1.1 benchmark suite. Click bit.ly/3PJGd8t to read the full results. #edgeAI #tinyML

If you are at the @databricks Data+AI Summit this week be sure to check out @MLCommons Exec Director @TheKanter's session - Advancing ML Through Benchmarks & Data, Thursday, June 29 at 2:30pm databricks.com/dataaisummit/s…

Calling all ISC attendees, stop by the @MLPerf roundtable 5/24 at 9am, Hall E - 2nd floor, to learn more about the MLPerf HPC benchmark suite V3.0 from the experts who created it. #mlcommons #MachineLearning #ISC23

Can you train a large language model? We’re adding GPT3 to MLPerf Training v3.0, so show us what you’ve got in Q2! Let the games begin 🥳🎉🎈🎊

Driving ML Forward in Automotive - David Kanter - 2023 CASPA Spring Symp... youtu.be/-TxJbUSv7EA via @YouTube

MLCommons: MLPerf Inference Delivers Power Efficiency and Performance Gains ow.ly/5vE250NBs04 #HPC @MLCommons @MLPerf

Learn about the business implications and check out the technical details of our @MLPerf submission in our blog. neuralmagic.com/blog/neural-ma…

.@MLPerf inference results are out! Two startups took on @nvidia and won (albeit for perf/Watt, and only in certain specific scenarios): Taiwan's Neuchips and Silicon Valley's SiMa. @Qualcomm also has some perf/Watt wins against H100 this time. #mlperf #ai eetimes.com/mlperf-inferen…

eetimes.com

MLPerf Inference: Startups Beat Nvidia on Power Efficiency - EE Times

How relevant are MLPerf inference scores for BERT to very large language models like ChatGPT?

New @MLPerf inference results from us at @neuralmagic are now up! We show 1,000x performance improvements and 92% reduction in energy consumption. Dive into the blog to learn more: neuralmagic.com/blog/neural-ma… #mlops #mlperf #ml

With the Intel #oneAPI tools and optimized #AI frameworks #deeplearning inference and training saw significant performance gains on the latest Intel Xeon CPU Max Series. Learn more: intel.ly/3GwgRop #DL #CPU @MLPerf @TensorFlow @PyTorch @IntelAI

[News from the #STBlog: bddy.me/3VjhvM7] We recently joined @MLCommons and released new @MLPerf Tiny results. See why the benchmark helps advance machine learning at the edge. @TheKanter

So excited at #SC22 to see which team will win the @SCCompSC. Teams are working hard benchmarking #HPL #HPCG #IO500 #AI before the application workload kick off at the gala tonight. Thanks @MLPerf @MLCommons @grigori_fursin @OctoML for the #AI benchmark.

🚀 The results are in! 📊 @MLPerf Results Published For November 9th Benchmarking Rounds | via @weights_biases wandb.ai/telidavies/ml-…
