HackAPrompt

@hackaprompt

Gaslight AIs & Win Prizes in the World's Largest AI Hacking Competition | Made w/ 💙 by the team @learnprompting

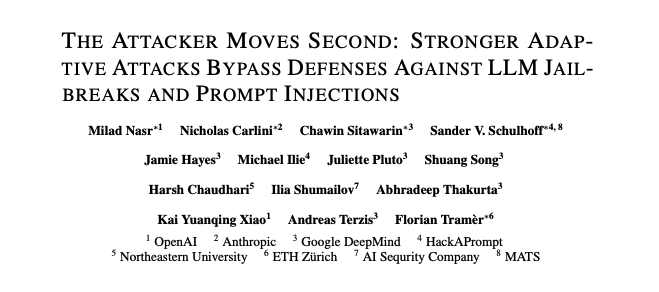

We partnered w/ @OpenAI, @AnthropicAI, & @GoogleDeepMind to show that the way we evaluate new models against Prompt Injection/Jailbreaks is BROKEN. We compared Humans on @HackAPrompt vs. Automated AI Red Teaming. Humans broke every defense/model we evaluated… 100% of the time 🧵

PSA: our team is online 24/7 helping customers scale with Gemini 3 Pro and Nano Banana Pro, please let us know what you need (including higher API rate limits)! My email is [email protected]

This presents serious limitations that must be overcome before LLMs can be deployed broadly in security sensitive applications. Our work highlights the need for more robust evaluations of defenses, and continued research into effective mitigations.

Human attackers generally succeed within just a few queries; automated attacks succeed in under 1,000 queries (usually significantly fewer). Attacks remain not just possible, but affordable.

New paper by OpenAI, Anthropic, GDM & more, showing that LLM security remains an unsolved problem. -- We tested twelve recent jailbreak and prompt injection defenses that claimed robustness against static evals. All failed when confronted with human & LLM attackers.

Paper: arxiv.org/abs/2510.09023 Many thanks to @srxzr @csitawarin @hackaprompt @florian_tramer @aterzis @KaiKaiXiao @iliaishacked

Human red-teamers could jailbreak leading models 100% of the time. What happens when AI can design bioweapons? Most jailbreaking evaluations allow a single attempt, and the models are quite good at resisting these (green bars in graph). In this new paper, human…

take a seat, fuzzers the force is not strong with you yet

You must design your LLM-powered app with the assumption that an attacker can make the LLM produce whatever they want.
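One way to act on that assumption is to treat every model response as attacker-controlled input and validate it before the app does anything with it. The sketch below is only an illustration: the action names, the JSON shape, the length limit, and the `parse_untrusted_action` helper are all hypothetical and do not come from the paper or from any particular framework.

```python
import json
from dataclasses import dataclass
from typing import Optional

# Hypothetical allowlist: the only actions this example app will ever execute.
ALLOWED_ACTIONS = {"search_docs", "summarize"}
# Hypothetical cap on argument size, chosen only for illustration.
MAX_ARG_LENGTH = 200


@dataclass(frozen=True)
class Action:
    name: str
    argument: str


def parse_untrusted_action(llm_output: str) -> Optional[Action]:
    """Treat model output as attacker-controlled; return None unless every check passes."""
    try:
        data = json.loads(llm_output)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    name = data.get("action")
    argument = data.get("argument")
    if not isinstance(name, str) or not isinstance(argument, str):
        return None
    # Reject anything outside the allowlist, no matter how the model was persuaded.
    if name not in ALLOWED_ACTIONS or len(argument) > MAX_ARG_LENGTH:
        return None
    return Action(name, argument)


if __name__ == "__main__":
    print(parse_untrusted_action('{"action": "search_docs", "argument": "reset password"}'))
    print(parse_untrusted_action('{"action": "delete_all_users", "argument": ""}'))
```

The point of the design is that safety does not depend on the model refusing anything: even a fully jailbroken model can only select from actions the app already considers acceptable. Privileges, rate limits, and human confirmation for sensitive steps would still need to be handled outside the model.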

This competition and research confirm what we’re seeing in the wild at @arcanuminfosec. Automation can only get you part way to testing for AI security. Creative AI Red Teamers and Pentesters are still the most important players to identify AI security risks. Amazing…

5 years ago, I wrote a paper with @wielandbr @aleks_madry and Nicholas Carlini that showed that most published defenses in adversarial ML (for adversarial examples at the time) failed against properly designed attacks. Has anything changed? Nope...
