#pytorch search results

Got a #PyTorch breakthrough to share? 🎤 The #CallForProposals for #PyTorchCon North America (Oct 20-21 | San Jose) is in full swing. Submit your technical session idea by June 7! Apply: bit.ly/4bIgqbs

From leading the #PyTorch transition to the @linuxfoundation to spearheading the Llama #OpenSource strategy, Joe Spisak has defined the #AI landscape. 🚀 Join him at #PyTorchCon Europe in Paris to discuss the future of collaborative AI! 📍 Paris | 7-8 April Details:

Join us in San Jose for #PyTorchCon North America! 🚀 Secure your spot for Oct 20-21 & save $400 with our early bird rates. Don’t miss the premier event for the #PyTorch community. Register now: bit.ly/4sh3DSw

Bring the power of #GoogleColossus to #PyTorch with Rapid Bucket + fsspec (GCSFS). 🔹 4.8x faster reads 🔹 2.8x faster writes 🔹 23% faster total training time with PyTorch Lightning Keep your GPUs fed and your workloads moving. Learn more: goo.gle/4945HpX

Heading to #NVIDIAGTC next week? Let’s talk @PyTorch. 🚀 We’re bringing the community to San Jose. Drop by Booth #338 to meet expert developers and core maintainers in person. Scaling, inference, foundation models, and OSS contributions. Full schedule below 👇 #PyTorch

MXFP8 training for MoEs on GB200s enables a 1.3x speedup with equivalent convergence versus BF16. This #PyTorch update via TorchAO and TorchTitan on Crusoe Cloud details gains from dynamically quantized grouped GEMMs. #AI #OpenSource 🔗 pytorch.org/blog/mxfp8-tra… ✍️ @vega_myhre,

🚀 It all starts tomorrow! #PyTorchCon Europe 7–8 April | Paris Two days of #ML innovation, community & #PyTorch breakthroughs. 🎟 It's not too late to join us: bit.ly/4bUWj91

Big update to #Monarch, our distributed programming framework for #PyTorch! Since its launch at the #PyTorchCon NA in October, the team has shipped Kubernetes support, RDMA on AWS EFA and AMD ROCm, distributed SQL-based telemetry, a terminal UI, and dashboards for live job

#ExecuTorch addresses fragmented native deployment for #AI agents as a #PyTorch native platform. It enables voice models across CPU, GPU, and NPU on Android, iOS, Linux, macOS & Windows 🔗 pytorch.org/blog/building-…

🚀 Put your brand in front of the #PyTorch community. Sponsor #PyTorchCon Europe, 7–8 April in Paris, and connect with the researchers, engineers & #ML leaders building the next generation of #AI. Showcase your tech. Meet top talent. Build real partnerships. 🤝 Explore

1️⃣ week until #PyTorchCon Europe! 🇫🇷 Paris becomes the home of #PyTorch for two days of #ML breakthroughs & community from 7-8 April. Check out the schedule: bit.ly/3PpSktm. 🎟 Join us! bit.ly/4bUWj91

Training large-scale MoE models just got easier -- with NVIDIA NeMo Automodel, you can train billion-parameter MoE models directly in #PyTorch using built-in GPU optimizations. ✅ Open source ✅ 200+TFLOPs/GPU Read more in our technical blog: developer.nvidia.com/blog/accelerat…

It’s here! 💥 #PyTorchCon Europe starts TODAY in Paris. Let’s build, learn, and celebrate the #PyTorch community together. 🎟 Join us: bit.ly/4bUWj91

The #GenAI & Multimodal track at #PyTorchCon Europe explores building & scaling generative & multimodal models using #PyTorch. Learn more: hubs.la/Q046Lxy40 🎟 Register → hubs.la/Q046LfC90

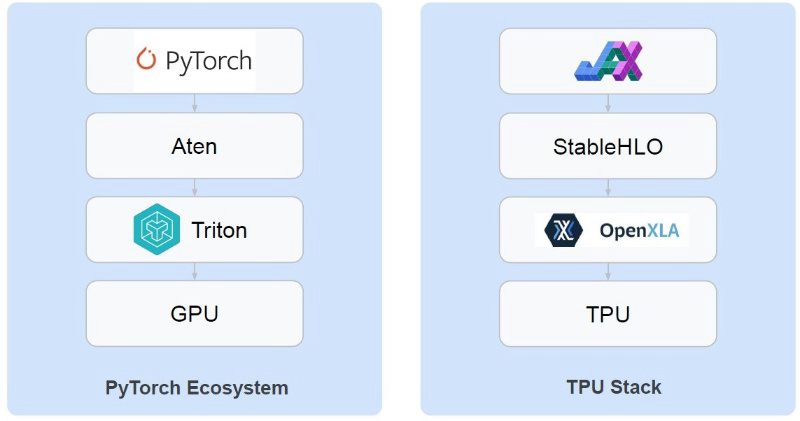

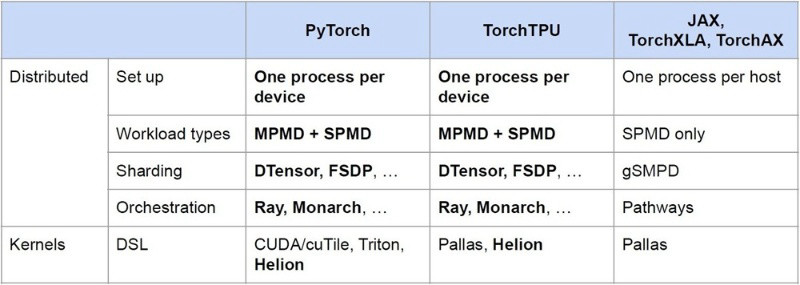

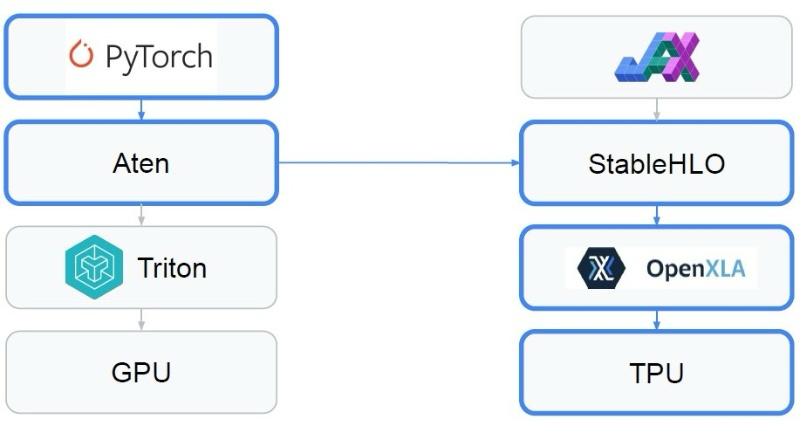

A new PyTorch-native backend is coming to unlock the power of Google TPUs: ✨ Run existing PyTorch with minimal code changes. ✨ Get a 50-100%+ performance boost with Fused Eager mode. Read the engineering deep dive here: goo.gle/4vbTQQl #TorchTPU #PyTorch #MLOps #AI

🔴 PyTorch SMG: CPU/GPU disaggregation → 3.5× LLM throughput. Llama 3.3 70B FP8. Prod: GCP, Oracle, Alibaba. 24-ai.news/en/news/2026-0… #PyTorch #LLMInference

Just shipped a full end-to-end edge deployment of ResNet-50 PyTorch → ONNX → ExecuTorch Real-time vision running efficiently on the edge. No cloud. Low latency. Production-ready pipeline. Read the complete guide here: anubhutiailabs.substack.com/p/end-to-end-e… #EdgeAI #ResNet #PyTorch

#PyTorch Lightning and #Intercom-client Hit in #Supply_Chain_Attacks to #Steal #Credentials buff.ly/557NIxP

Day 1 of my Deep Learning journey ✔️ Started with PyTorch 🔥 • What is PyTorch • Who created it • Timeline & evolution • Features • PyTorch vs TensorFlow Beginning my DL journey 🚀 #DeepLearning #PyTorch #LearningInPublic

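#Security #PyTorch #Malware If you use PyTorch for medical AI development, this needs checking. If the training environment is compromised, patient data could be leaked. Supply chain compromise is truly dangerous!!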

Machine Learning with PyTorch and Scikit-Learn Link - amzn.to/4tBqr0y #MachineLearning #Python #PyTorch #DeepLearning #AI #programming

Once you build it from scratch first, the PyTorch version finally makes sense. Still a long way to go, but this tutorial removed a lot of the confusion. Next up: Transfer Learning for Computer Vision. #PyTorch #MachineLearning #BuildInPublic

🟡 PyTorch AutoSP: compiler in DeepSpeed auto-converts transformer code to sequence-parallel for 100k+ tokens. Tested 8× A100. 24-ai.news/en/news/2026-0… #PyTorch #LLM

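MarketSonar is free chart analysis software that runs on Windows. • Browse by flipping quickly from one ticker to the next • A rich set of indicators • Powerful search • Python integration marketsonar2.wixsite.com/marketsonar/ #Charts #TechnicalAnalysis #pytorch #keras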

Fix PyTorch bottlenecks with profiling, not just more compute. 📊 High DataLoader time = I/O limits. 🧠 High MemCpy = inefficient tensor creation. Get the guide: na2.hubs.ly/H05b2P50 #MLOps #PyTorch

🤯[NEW VIP COURSE] Next-Gen AI: Deep Reinforcement Learning in #PyTorch GET 75% OFF: deeplearningcourses.com/c/deep-reinfor… #artificialintelligence #deeplearning #datascience #machinelearning

Starting my ML/DL journey — learning in public. Built my first Linear Regression model using PyTorch (nn module) to understand how models learn. 🔗 Project: github.com/NeuroDeepDev/D… Open to feedback on my code and approach. #MachineLearning #PyTorch #LearnInPublic

#Meta #PyTorch dominates #AI #research via #NVIDIA #CUDA, while #Google #TPUs lagged due to #XLA friction. #TorchTPU removes these barriers with #MPMD support and #Eager Modes, enabling smoother PyTorch execution on #TPUs. #GoogleCloudNext2026

#Meta #PyTorch became widely adopted for its easy‑to‑debug Eager Mode, strong Python integration, and tight coupling with #NVIDIA #CUDA, while #Google’s #TPU struggled due to low PyTorch compatibility requiring XLA and code rewrites.

Next up? Before letting torch.nn do the heavy lifting, my goal is to build a neural network completely from scratch. Time to code the raw math and truly understand the mechanics under the hood. #PyTorch #MachineLearning #100xDevs #LearningInPublic
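For readers wondering what "coding the raw math" might look like before reaching for torch.nn, here is a minimal sketch in plain Python. It is not the poster's code: it assumes the simplest possible case, a single linear neuron `y = w * x + b` trained by gradient descent on mean squared error, and the function name `train_linear`, the toy data, and the hyperparameters are all illustrative choices.

```python
def train_linear(xs, ys, lr=0.05, epochs=2000):
    """Fit y = w * x + b by gradient descent on mean squared error.

    Hypothetical helper for illustration; not part of PyTorch.
    """
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Forward pass: prediction error for every sample.
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        # Backward pass: gradients of the MSE loss with respect to w and b.
        grad_w = 2.0 / n * sum(e * x for e, x in zip(errors, xs))
        grad_b = 2.0 / n * sum(errors)
        # Gradient descent update.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Toy data generated from y = 2x + 1 (an assumption for this sketch).
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]
w, b = train_linear(xs, ys)
print(f"w={w:.4f}, b={b:.4f}")
```

The forward pass, gradient computation, and update are the three steps that torch.nn, autograd, and an optimizer would otherwise handle.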

Built at the @Scaler_SST AI Hackathon Finals (@Meta × @PyTorch × @huggingface) GPU Budget Negotiation Arena — an OpenEnv-style multi-agent RL environment for compute-market negotiation... Space: huggingface.co/spaces/abhinav… #OpenEnv #Meta #PyTorch #HuggingFace #AI #RL #LLM

linktr.ee/learnbydoingwi… #learnbydoingwithsteven #PyTorch #AIEfficiency #LLM #DeepLearning #StanfordCS336 #MachineLearning #AIOptimization #H100 #GPU

🔄 GitHub Trending (Refresh) TorchCode: LeetCode for PyTorch. Practice implementing softmax, attention, GPT-2 and more from scratch with instant auto-grading. Jupyter-based, 40 problems, no GPU required. Self-host or try online. 3,580 stars #PyTorch #MachineLearning #Education
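As a rough idea of the kind of "from scratch" exercise the post describes, a numerically stable softmax can be sketched in plain Python. This is an illustrative sketch only, not a TorchCode problem or its reference solution; the function name and inputs are assumptions.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    # Subtracting the maximum does not change the result
    # but keeps math.exp from overflowing on large inputs.
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


probs = softmax([1.0, 2.0, 3.0])
print(probs)

# Large, equal logits would overflow a naive exp(x) implementation.
stable = softmax([1000.0, 1000.0])
print(stable)
```

The max-subtraction step is the detail such exercises usually check, since a naive `exp(x) / sum(exp(x))` fails on large logits.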

#PyTorchCon Europe 2026 #CallForProposals is open! 🎤 Submit to speak by 8 February for sessions at the Paris event, 7-8 April. Share your #PyTorch insights on research, applications, tooling, best practices, performance, community building, or innovative use cases. ➡️ Submit

January PyTorch newsletter is out. Get updates on #PyTorchConferenceEurope CFPs, #PyTorchDayIndia registration, a 2025 year-in-review, and new technical blogs on scalable RL, FlexAttention, and more. Read: hubs.ly/Q03__YVW0 Subscribe: hubs.ly/Q03__ZV30 #PyTorch

PyTorch at the micro-edge? Yes. See how ExecuTorch brings PyTorch models to Arm microcontrollers—quantized, compiled, and running on a Corstone-320 + Ethos-U NPU (via FVP). 🔗 hubs.la/Q045FHpT0 From training to deployment, end to end. #PyTorch #ExecuTorch #EdgeAI #TinyML

📣 ICYMI: #PyTorchDayIndia is coming to Bengaluru on 7 February! Register NOW & reserve your seat for a full day of #PyTorch technical talks, discussions & networking with #AI & #ML leaders ➡️ hubs.la/Q03-s0qv0

🎯 Secure your spot at early bird rates! #PyTorchDayIndia is going live 7 February in Bengaluru. Dive deep into #PyTorch with industry experts, cutting-edge demos & real-world #AI applications. Limited early bird pricing available until 31 January ➡️ hubs.la/Q03_bJhM0

We're excited to announce that @nota_ai has joined PyTorch Foundation as a Silver Member to advance open source AI 🎉 Nota AI will support the PyTorch community with model compression, quantization, and hardware-aware optimization, from edge to cloud. #PyTorch #OpenSourceAI

The Triton team at Meta shares a working implementation of warp specialization in the #Triton compiler, along with the current design and upcoming roadmap, and invites community feedback from the #PyTorch and open source AI community. 🔗hubs.la/Q03-cD2J0 #AIInfrastructure

New to the PyTorch Ecosystem Landscape: Kubetorch. Kubetorch enables ML research and development on Kubernetes across training, inference, RL, evals, data processing, and more, in a simple and unopinionated package. Learn more: hubs.la/Q0453qFd0 #PyTorch #Kubernetes

#PyTorch 2.10 includes updates focused on performance and numerical debugging. Next week, Andrey Talaman, Nikita Shulga, and Shangdi Yu (Meta) will provide a brief update on the release and answer questions in a live Q&A. Topics include TorchScript deprecation, torch.compile

⏳ 3 days left to save! Spend only €449 on your #PyTorchCon Europe ticket if you register by 27 February. Join researchers, developers, and #AI engineers from 7-8 April in Paris and help shape #PyTorch in production. Schedule: hubs.la/Q044yF_z0 Register:

Scaling RL for LLMs is hard. The #PyTorch team at Meta open sourced torchforge and shares #ReinforcementLearning lessons from evaluating it with Weaver on a 512 GPU cluster. 🔗 hubs.la/Q03-fZs_0

FlexAttention has been adopted across popular #LLM ecosystem projects, including Hugging Face, vLLM, and SGLang, reducing the effort required to adapt and experiment with newer attention variants in modern LLMs. 🔗 Read our latest blog from @Intel #PyTorch & Triton Teams:

#DeepLearning with #PyTorch Step-by-Step Beginner's Guides (3 volumes) Vol.1 (Fundamentals) and 2 (Computer Vision): amzn.to/3TWr8SL Vol.3 (Sequences and #NLProc): amzn.to/49bh5h2 ———— #MachineLearning #ML #AI #DataScience #DataScientist #Python #ComputerVision
