#semantic_inference search results

Hindsight is 20/20, but this is perhaps a contributing factor in why robust estimators of epistemic/reducible uncertainty (and interpretability-by-exemplar) emerged from NLP/computational linguistics research for models with non-identifiable parameters (e.g., neural…

When I say "co-occurrence" it's not entirely clear, but what I mean is any sequence of things that occur together. Users who buy diapers tend to buy beer. The two are semantically unrelated, but husbands who shop tend to use the opportunity to buy beer. A pure semantic model has no prayer of…
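The diapers-and-beer example is a classic association between items that have no semantic link. The sketch below makes that point concrete with market-basket data. It counts how often each pair of items appears in the same basket and computes lift, which is P(a and b) / (P(a) P(b)). A lift above 1 means the pair co-occurs more often than independence would predict.

The baskets are made-up toy data, and `pair_stats` is a hypothetical helper, not code from any particular library.

```python
from collections import Counter
from itertools import combinations


def pair_stats(baskets):
    """Return (support, lift) for every item pair that co-occurs in at least one basket."""
    n = len(baskets)
    item_counts = Counter()
    pair_counts = Counter()
    for basket in baskets:
        items = sorted(set(basket))  # dedupe and fix pair ordering
        item_counts.update(items)
        pair_counts.update(combinations(items, 2))
    stats = {}
    for (a, b), count in pair_counts.items():
        support = count / n  # P(a and b)
        lift = support / ((item_counts[a] / n) * (item_counts[b] / n))
        stats[(a, b)] = (support, lift)
    return stats


# Hypothetical toy transactions
baskets = [
    ["diapers", "beer", "wipes"],
    ["diapers", "beer"],
    ["diapers", "milk"],
    ["bread", "milk"],
    ["beer", "chips"],
]

support, lift = pair_stats(baskets)[("beer", "diapers")]
print(f"support={support:.2f} lift={lift:.2f}")  # prints "support=0.40 lift=1.11"
```

Nothing in this computation looks at what the items mean. The association comes purely from the co-occurrence counts.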

They absolutely do infer. They don't reason. Inference is specifically defined as the process of reaching a conclusion through evidence OR reasoning. Taking an input context and applying to it the pattern matching compressed into the weights from the dataset IS inference.

This is precisely why I have been developing a form of semiotic engineering. Once we hit the ceiling for how smart these models can be, and we learn what products we need, it's going to come back down to efficiency and optimization of resources. Inference types become their economy.

Pragmatics integration: Formal semantics incorporates implicatures and context (Gricean maxims) to resolve ambiguities, acknowledging that reason's "absolute principles" are context-bound, not universal absolutes.

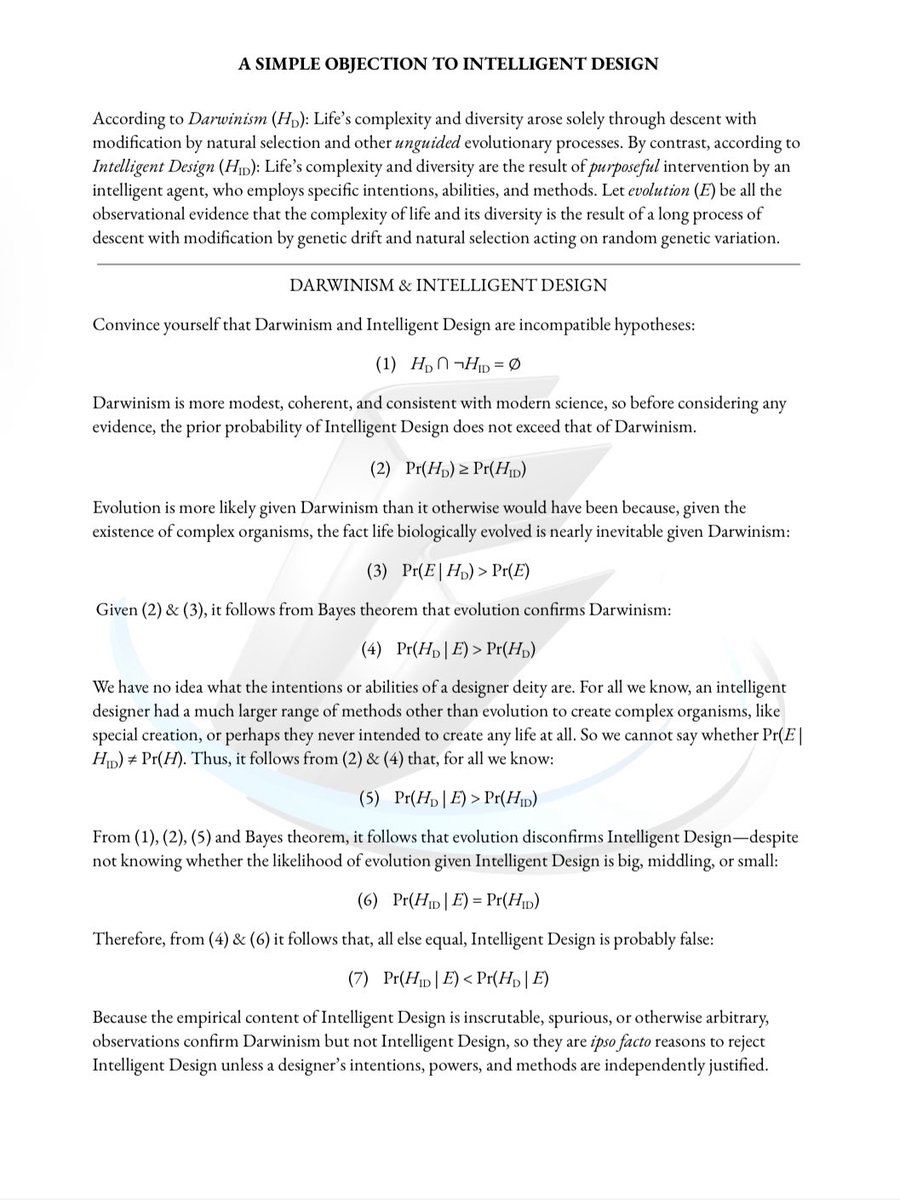

If the design inference abandons an inductive generalization for a likelihood, we have no idea whether an unembodied mind would create human limbs and trees with the features they have. There is no way of independently knowing whether such a likelihood is big, middling, or small.

The reasoning uses complex-system triangulation to refine layered causal predictions gSenti

With the Google leak, Creative Logical Inference is the only method available to map concepts (like “Effort”) to their likely machine-readable counterparts (like [contentEffort]).

Pretty much, and the "after the fact" semantics (inductive) differ substantially from those of homologically prioritized semantics (deductive) in a manner that is qualitatively quantifiable in form and function. Words seem to matter because we're made of them one way or another.

In LLMs, "inference" means using a trained model to generate outputs, like responses to your queries in ChatGPT. It's the runtime phase where the model processes inputs and predicts results. Unlike training (learning from data), inference is ongoing and scales with user demand,…
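The training/inference split can be shown with a deliberately tiny stand-in for an LLM: a bigram count model. Training happens once and estimates next-token counts from a corpus. Inference then applies that frozen model to each new prompt, predicting one token at a time.

Real LLMs run a neural network forward pass per token instead of a count lookup. The corpus and function names below are hypothetical, chosen for illustration only.

```python
from collections import Counter, defaultdict


def train_bigram(corpus):
    """Training phase: estimate next-token counts from data (done once)."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        tokens = sentence.split()
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts


def generate(model, prompt, max_new_tokens=5):
    """Inference phase: apply the frozen model to a new input, one token at a time."""
    tokens = prompt.split()
    for _ in range(max_new_tokens):
        followers = model.get(tokens[-1])  # .get avoids inserting new keys into the defaultdict
        if not followers:
            break  # no known continuation for this token
        tokens.append(followers.most_common(1)[0][0])  # greedy decoding
    return " ".join(tokens)


# Hypothetical training corpus
model = train_bigram(["the model predicts the next token", "the model predicts a reply"])
print(generate(model, "the", max_new_tokens=2))  # prints "the model predicts"
```

Every call to `generate` is a separate inference request against the same trained counts. This is why inference cost grows with usage while training cost does not.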

???? "inference involves using existing information to make an educated guess or a likely deduction about something that isn't stated directly" I "assumed" a statement was meant to make me reflect on things like those I've listed above.

👇 We challenge this interpretation. SAM2 pretraining, instance matching across views, parallels self-supervised objectives known to induce semantic structure. If true, semantics should be: i) easily disentangled via adapters, ii) learnable from base classes and generalizable.

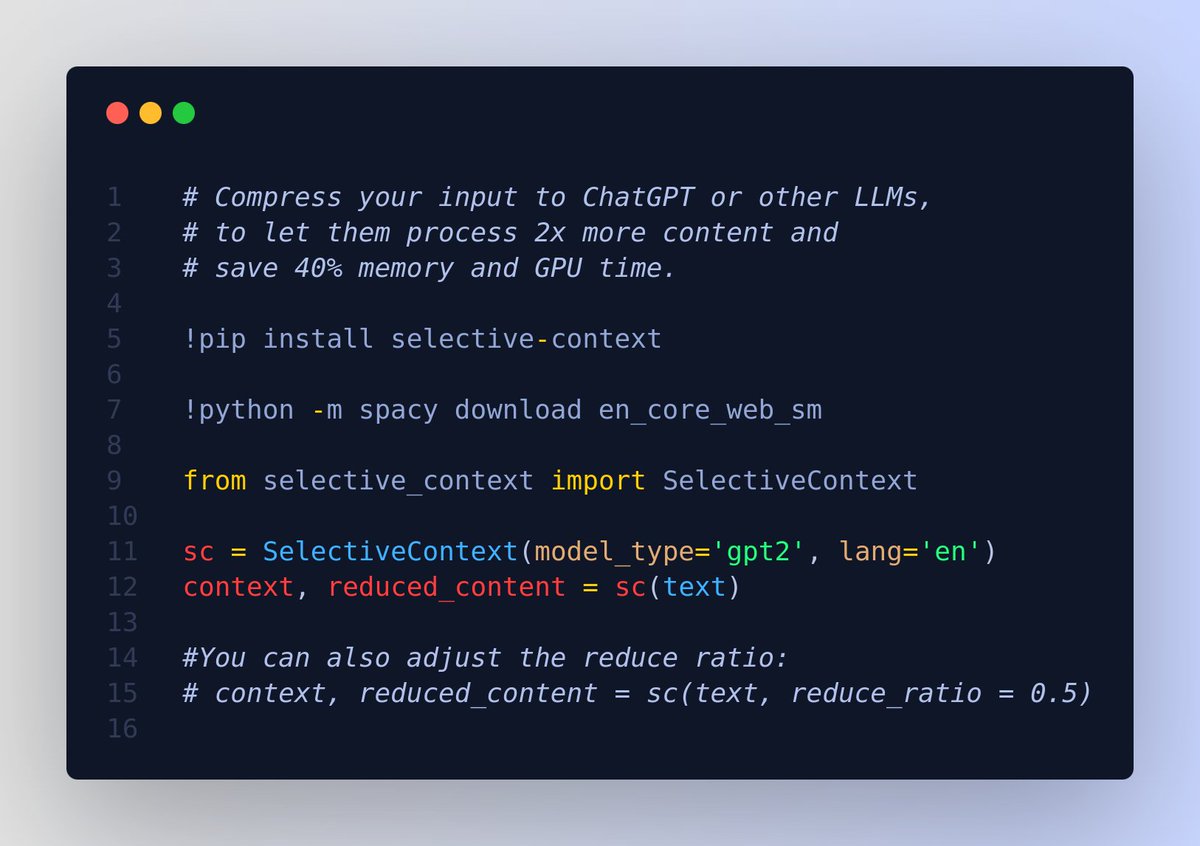

A cool repo: compress your input to ChatGPT or other LLMs to let them process 2x more content. 📌 Under the hood, it's implementing this paper: "Compressing Context to Enhance Inference Efficiency of Large Language Models" 📌 The key idea revolves around self-information…
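The self-information idea works as follows. A token's self-information is -log p(token). Highly predictable tokens carry little information and are candidates for removal, while rare tokens are kept.

This is a minimal sketch, not the repo's code. It assumes a unigram frequency table built from a reference text stands in for the language-model probabilities that the paper relies on. The names `self_information` and `compress` are hypothetical.

```python
import math
from collections import Counter


def self_information(tokens, reference_counts, total):
    """-log2 p(token) with add-one smoothing.

    NOTE: a unigram stand-in; the paper scores tokens with a causal LM instead.
    """
    vocab = len(reference_counts) + 1  # +1 slot for unseen tokens
    return [-math.log2((reference_counts[t] + 1) / (total + vocab)) for t in tokens]


def compress(text, reference_text, keep_ratio=0.5):
    """Keep the most informative tokens, preserving their original order."""
    tokens = text.split()
    ref = Counter(reference_text.split())
    scores = self_information(tokens, ref, sum(ref.values()))
    k = max(1, round(len(tokens) * keep_ratio))
    # Indices of the k highest-information tokens, re-sorted into reading order
    top = sorted(range(len(tokens)), key=lambda i: scores[i], reverse=True)[:k]
    return " ".join(tokens[i] for i in sorted(top))


reference = "the the the a a of of and and is is"  # hypothetical reference text
print(compress("the model is a compressor of the context", reference))
```

Common function words such as "the" score lowest and are dropped first. Content words that are rare in the reference text survive.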

We solved *Semantic Search-as-you-Type* ⌨️ It is faster than embedding inference ⚡ It is Open-Source 👐 It is in Rust 🦀 We use it for our docs 📜 Read more how 👇

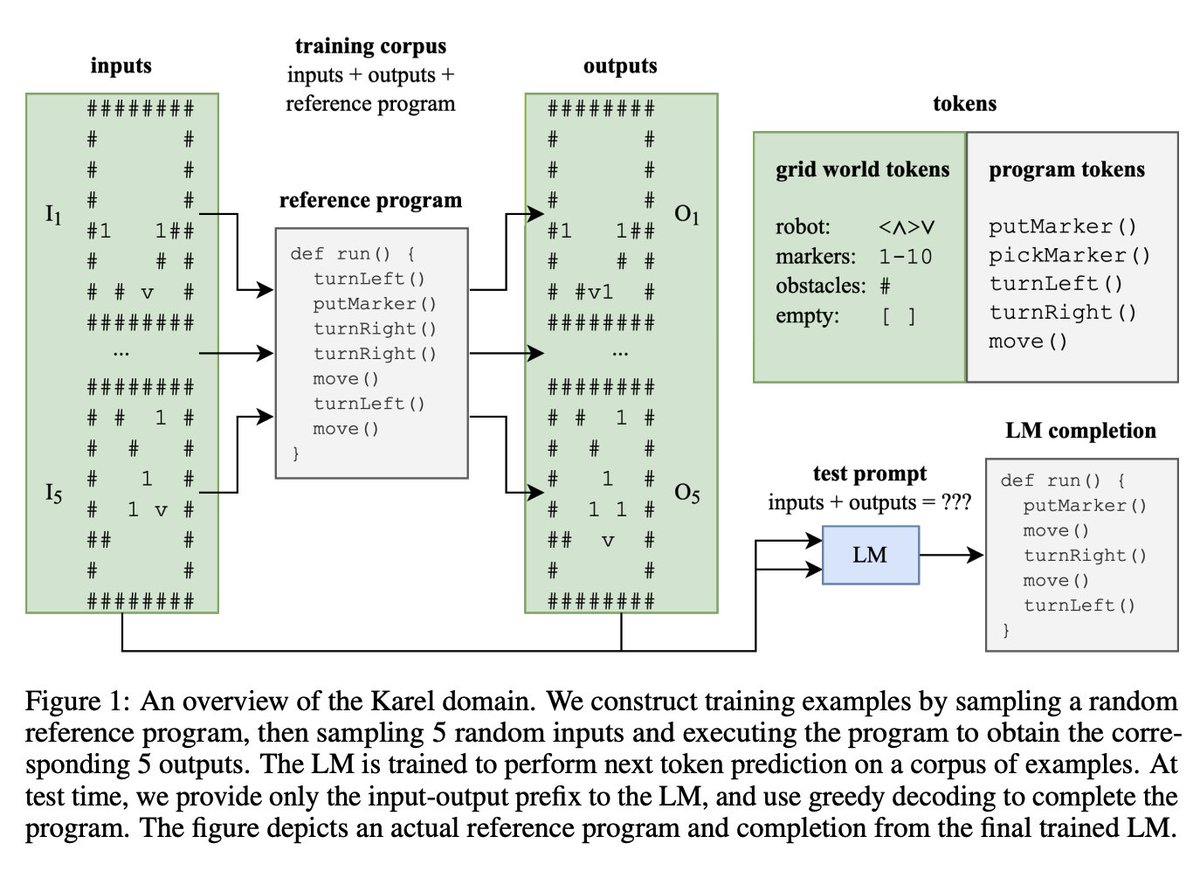

Evidence of Meaning in Language Models
- Train an LM with next-token prediction on a corpus of programs
- Precisely defining concepts of correctness and semantics
- The model learns to generate correct programs that are shorter than, and semantically different from, those in its training set

arxiv.org/abs/2305.11169

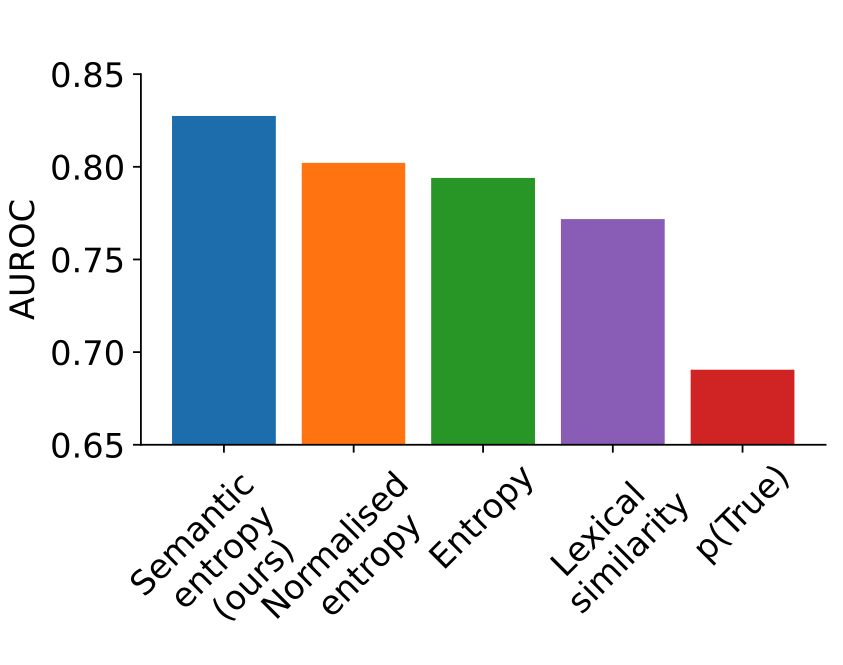

Measuring Uncertainty in LLMs
- Hard to know when to trust LLMs
- Hard to measure uncertainty in natural language because different words can mean the same thing
- By considering shared meanings, "semantic entropy" is more predictive of LLM accuracy
- Works out of the box

Paper: arxiv.org/abs/2302.09664
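Semantic entropy groups sampled answers by meaning before computing entropy. This way, paraphrases of the same answer do not inflate the uncertainty estimate.

This is a simplified sketch under two stated assumptions:
- **Equivalence test:** the paper decides equivalence with bidirectional entailment from an NLI model. Here `naive_equivalent` is a hypothetical normalization stand-in.
- **Cluster probabilities:** these are estimated from sample counts rather than from the model's sequence likelihoods.

```python
import math
from collections import Counter


def naive_equivalent(a, b):
    """Hypothetical stand-in for bidirectional entailment: case/punctuation-insensitive match."""
    def norm(s):
        return "".join(ch for ch in s.lower() if ch.isalnum() or ch.isspace()).split()
    return norm(a) == norm(b)


def cluster_by_meaning(answers, equivalent):
    """Greedily assign each answer to the first cluster whose representative it matches."""
    clusters = []
    for ans in answers:
        for cluster in clusters:
            if equivalent(ans, cluster[0]):
                cluster.append(ans)
                break
        else:
            clusters.append([ans])
    return clusters


def entropy(counts):
    """Shannon entropy (nats) of the empirical distribution given by counts."""
    total = sum(counts)
    return -sum((c / total) * math.log(c / total) for c in counts if c)


def semantic_entropy(answers, equivalent=naive_equivalent):
    return entropy([len(c) for c in cluster_by_meaning(answers, equivalent)])


def lexical_entropy(answers):
    return entropy(list(Counter(answers).values()))


samples = ["Paris", "paris.", "Paris", "Lyon"]  # hypothetical sampled answers
print(lexical_entropy(samples), semantic_entropy(samples))
```

"Paris" and "paris." count as different strings, which raises the lexical entropy. They collapse into one meaning cluster, so the semantic entropy comes out lower.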

“Semantic” is when words are closely related in meaning. Again, you’re wrong. You definitely should be writing IELTS.

Inference isn't really a skill. There's no part of the brain that does your inferring for you. Either you have sufficient conceptual and real-world knowledge to 'see' a meaning or you don't. Spend less time practising inference and more time teaching concepts and background...

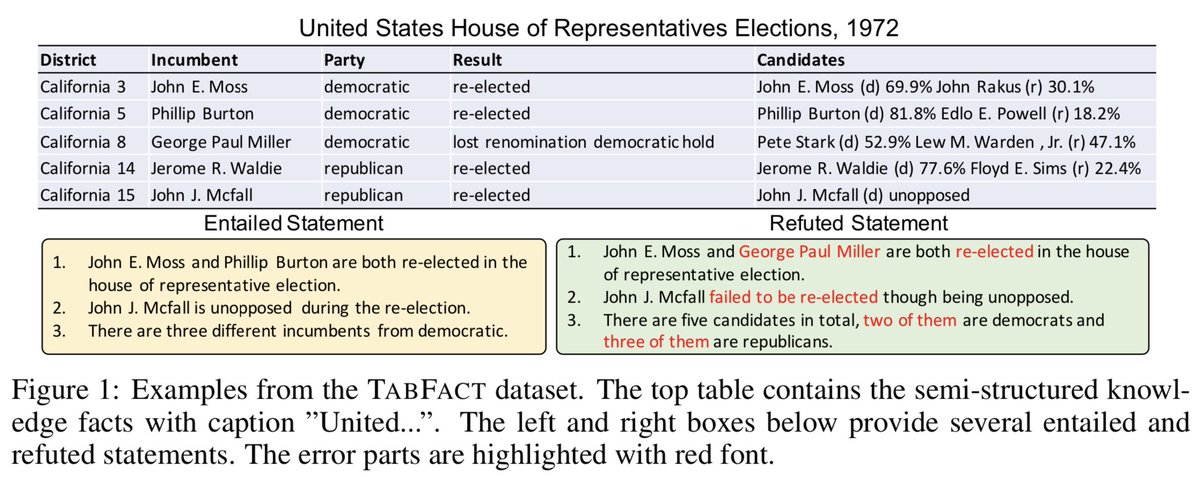

Natural Language Inference (NLI) over tables by @WilliamWangNLP et al. arxiv.org/abs/1909.02164 Tables are a ubiquitous but little-studied human information source, stuck between text and structured data (though see semantic parsing work, e.g., aclweb.org/anthology/P15-… by @IcePasupat).
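One way to frame NLI over tables, in the spirit of the semantic parsing work the tweet mentions, is to map each hypothesis to a small program. The program is executed against the table, and a true result means the claim is entailed. In a real system, translating the sentence into a program is the hard, learned step.

This is an illustrative sketch only. The programs here are hand-written, and the operations (`argmax`, `count_where`), the table and the claims are all hypothetical.

```python
from typing import Callable

Row = dict[str, object]
Table = list[Row]


def argmax(table: Table, column: str) -> Row:
    """Row with the largest value in `column`."""
    return max(table, key=lambda row: row[column])


def count_where(table: Table, column: str, value: object) -> int:
    """Number of rows whose `column` equals `value`."""
    return sum(1 for row in table if row[column] == value)


def verify(table: Table, program: Callable[[Table], bool]) -> str:
    """Execute a claim's program against the table and map the result to an NLI label."""
    return "ENTAILED" if program(table) else "REFUTED"


# Hypothetical table and hand-written claim-to-program mappings
table: Table = [
    {"team": "Lions", "wins": 10, "conference": "East"},
    {"team": "Bears", "wins": 7, "conference": "East"},
    {"team": "Hawks", "wins": 12, "conference": "West"},
]
claims = {
    "The Hawks have the most wins.": lambda t: argmax(t, "wins")["team"] == "Hawks",
    "Two teams play in the West.": lambda t: count_where(t, "conference", "West") == 2,
}

for claim, program in claims.items():
    print(claim, "->", verify(table, program))
```

With this table, the first claim comes out ENTAILED. The second comes out REFUTED, because only one team is listed in the West.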
