#tensorflow search results

Want to build your first #TensorFlow model, but not sure where to start? In this tutorial, you’ll: → Load and explore a dataset → Build and train your model → See what actually improves accuracy ▶️ Watch the full video by @iuliaferoli: youtu.be/nswGrvOhaOY

Built an IMDB sentiment classifier using Embedding + SimpleRNN in TensorFlow 🎬🧠 ~98.8% train acc, ~80–85% val acc (observed overfitting). Next: Dropout, EarlyStopping & LSTM 🚀 Code: github.com/SayliThukral/D… #DeepLearning #NLP #TensorFlow #Python
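
The EarlyStopping step named as a next move can be illustrated without any framework. This is a minimal sketch of a patience-based stopping rule, assuming validation loss is the monitored quantity. The function name `early_stopping_epoch`, its `patience` and `min_delta` parameters, and the loss values are illustrative assumptions; none of them come from the linked repository, and this is not Keras's own implementation.

```python
def early_stopping_epoch(val_losses, patience=2, min_delta=0.0):
    """Return the 0-based epoch at which training would stop.

    Training stops once the validation loss has failed to improve by more
    than `min_delta` for `patience` consecutive epochs. If that never
    happens, the index of the last epoch is returned.
    """
    best = float("inf")
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best - min_delta:
            # New best validation loss: reset the patience counter.
            best = loss
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch
    return len(val_losses) - 1


# Hypothetical validation losses for a run that starts to overfit.
val_losses = [0.60, 0.48, 0.45, 0.47, 0.52, 0.58]
stop_epoch = early_stopping_epoch(val_losses, patience=2)
# Epoch with the lowest validation loss seen before stopping.
best_epoch = min(range(stop_epoch + 1), key=val_losses.__getitem__)
print(stop_epoch, best_epoch)
```

With these made-up numbers the rule stops two epochs after the lowest validation loss, which is the point where one would normally restore the best weights.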

Training your first #TensorFlow model isn’t really about the model – it’s about what you learn from it. A simple example: Train two models on the same data. Model A: ~88% accuracy Model B: Slightly better, but ~50% longer training That’s your first real ML trade-off: is a small

Just trained a Neural Network on MNIST using TensorFlow + Keras 🧠 Flatten → Dense(128, ReLU) → Dense(10, Softmax) ✅ Normalized data ✅ Adam optimizer ✅ Model saved & reloaded Full notebook 👇 github.com/victorjanni/fa… #MachineLearning #Python #TensorFlow
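
For a sense of scale, the size of the Flatten → Dense(128) → Dense(10) stack can be worked out by hand. The sketch below assumes the standard 28 × 28 grayscale MNIST input and dense layers with bias terms; the helper name `dense_params` is made up for illustration and is not from the linked notebook.

```python
def dense_params(n_inputs, n_units):
    """Trainable parameters of a fully connected layer: weights plus biases."""
    return n_inputs * n_units + n_units


# Assumption: each MNIST image is 28 x 28 pixels with a single channel.
flatten_out = 28 * 28                    # Flatten has no trainable parameters
hidden = dense_params(flatten_out, 128)  # Dense(128, ReLU)
output = dense_params(128, 10)           # Dense(10, Softmax)
total = hidden + output

print(hidden, output, total)  # 100480 1290 101770
```

Almost all of the parameters sit in the first dense layer, because it connects every one of the 784 input pixels to each of the 128 hidden units.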

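Hidden feature: selfie mode. The silhouette of the person captured by the front camera appears on the site as a shadow. It starts when you click the mysterious object that shows up a little while after the shadow film begins. It works on smartphones too, but we recommend experiencing it on a PC. #WebGL #Threejs #TensorFlow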

We planned and built the website for WRITING & DESIGN, the company founded by 高崎卓馬 (@takumantakuman) and 矢花宏太. We aimed for a site that directly embodies their earnest approach to making things. wd-inc.jp CD, AD, De: イム ジョンホ @junim PL, AD, TD: 岡部 健二 @kenjiokabe

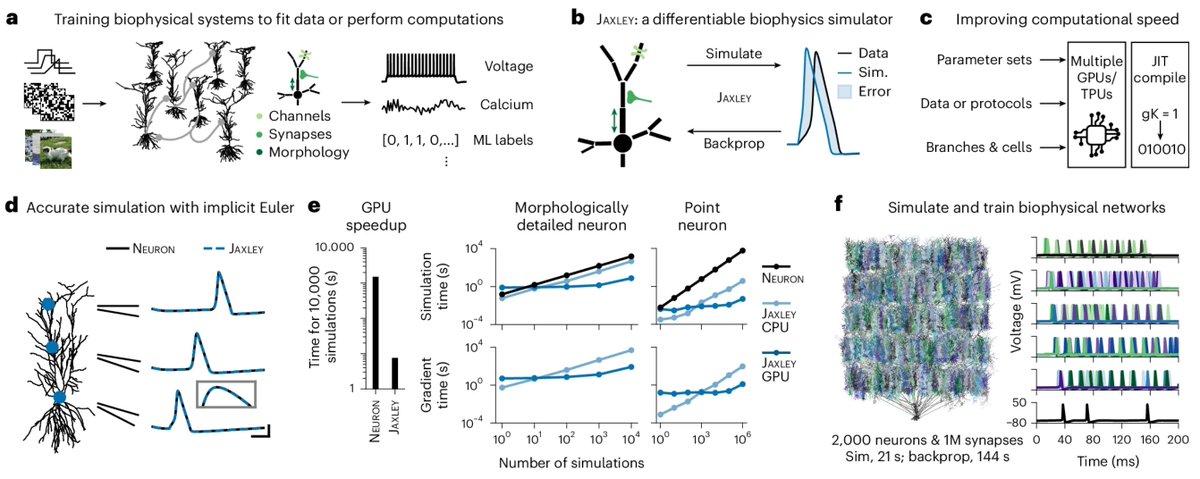

Behold Jaxley: a differentiable simulator for biophysical neuron models, written with the Python library #JAX, because we needed something more than #tensorflow. Imagine sweet RNN models with Hodgkin–Huxley-type neurons 🧠 nature.com/articles/s4159… #neuroAI
