Side effect of blocking Chinese firms from buying the best NVIDIA cards: top models are now explicitly being trained to work well on older/cheaper GPUs. The new SoTA model from @Kimi_Moonshot uses plain old BF16 ops (after dequant from INT4); no need for expensive FP4 support.

🚀 "Quantization is not a compromise — it's the next paradigm." After K2-Thinking's release, many developers have been curious about its native INT4 quantization format. 刘少伟, infra engineer at @Kimi_Moonshot and Zhihu contributor, shares an insider's view on why this choice…

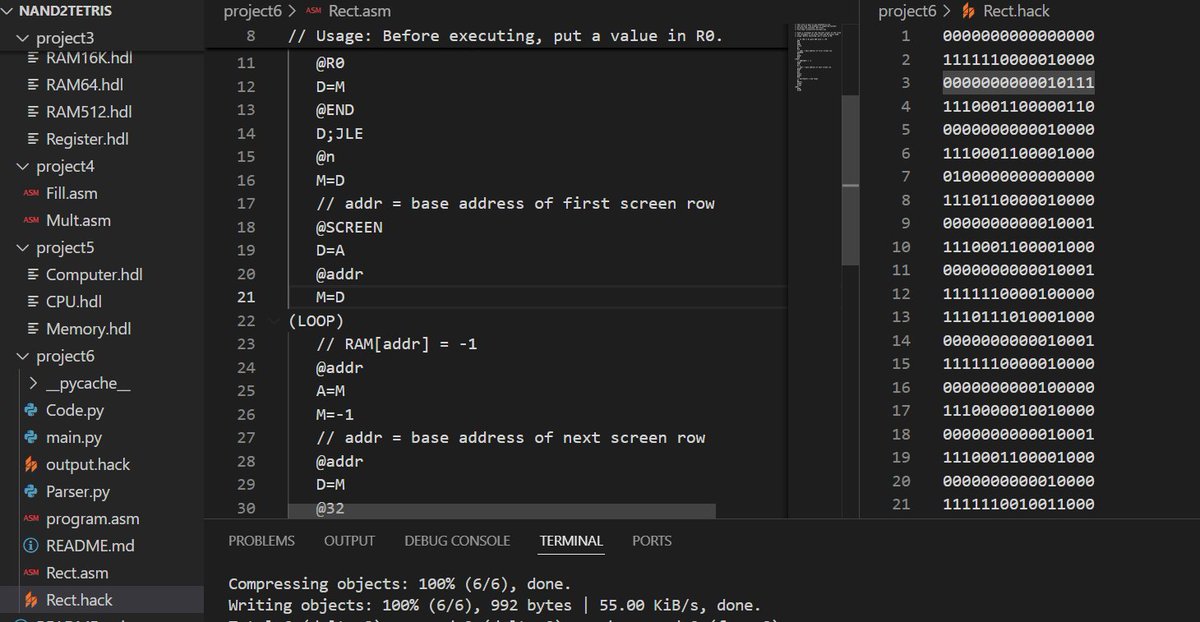
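To make the "dequant from INT4, then plain BF16 ops" point concrete, here is a minimal NumPy sketch of weight-only INT4 quantization. It is not Moonshot's actual format: the group size of 32, the symmetric per-group scale, the low-nibble-first packing, and every function name are assumptions for illustration. NumPy has no BF16 dtype, so `float32` stands in for BF16.

```python
import numpy as np

GROUP = 32  # assumed group size (hypothetical, not taken from the post)


def quantize_int4(w: np.ndarray):
    """Symmetric per-group INT4 quantization of a 1-D float array."""
    g = w.reshape(-1, GROUP)
    # One scale per group maps the largest magnitude onto the int4 value 7.
    scale = np.abs(g).max(axis=1, keepdims=True) / 7.0
    scale = np.where(scale == 0, 1.0, scale).astype(np.float32)
    q = np.clip(np.round(g / scale), -8, 7).astype(np.int8)
    return q, scale


def pack_int4(q: np.ndarray) -> np.ndarray:
    """Pack pairs of int4 values into single bytes, low nibble first."""
    u = (q.reshape(-1) & 0x0F).astype(np.uint8)
    return u[0::2] | (u[1::2] << 4)


def unpack_int4(packed: np.ndarray) -> np.ndarray:
    """Recover signed int4 values (stored as int8) from packed bytes."""
    q = np.empty(packed.size * 2, dtype=np.int8)
    q[0::2] = (packed & 0x0F).astype(np.int8)
    q[1::2] = (packed >> 4).astype(np.int8)
    # Sign-extend: nibble values 8..15 represent -8..-1.
    return np.where(q > 7, q - 16, q).astype(np.int8)


def dequantize(packed: np.ndarray, scale: np.ndarray) -> np.ndarray:
    """Expand packed INT4 weights to float32 (stand-in for BF16)."""
    q = unpack_int4(packed).reshape(-1, GROUP).astype(np.float32)
    return (q * scale).reshape(-1)


def matvec(packed: np.ndarray, scale: np.ndarray, x: np.ndarray) -> np.ndarray:
    """Dequantize a row-major weight matrix, then run an ordinary float matmul."""
    w = dequantize(packed, scale).reshape(-1, x.shape[0])
    return w @ x
```

The hardware-relevant part is `matvec`: the weights are stored and moved as 4-bit values, but the arithmetic itself is an ordinary floating-point matmul, so no FP4 support is needed on the GPU.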

Finally wrapped up the hardware half of Nand2Tetris, took me a week. It had been on my to-do list for way longer than I’d like to admit.

DeepSeek engineers so cracked they bypassed CUDA
