#distillation search results

Need to get up to speed on #distillation? AIChE Academy's new course by Daniel Summers will help you understand your tower's operational range, teach you to design packed or trayed towers, and provide #troubleshooting insights and distillation #safety. bit.ly/4adaG9m
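
The course blurb mentions designing packed or trayed towers. As a hedged illustration of one classic shortcut used in early tower sizing (not necessarily what the course teaches), the Fenske equation gives the minimum number of theoretical stages at total reflux for a binary mixture with constant relative volatility α. The compositions and α below are made-up illustrative values.

```python
import math

def fenske_min_stages(x_d: float, x_b: float, alpha: float) -> float:
    """Minimum theoretical stages at total reflux (Fenske equation)
    for a binary mixture with constant relative volatility alpha.

    x_d: light-key mole fraction in the distillate
    x_b: light-key mole fraction in the bottoms
    """
    if not (0 < x_b < x_d < 1):
        raise ValueError("need 0 < x_b < x_d < 1")
    if alpha <= 1:
        raise ValueError("relative volatility must exceed 1")
    # Separation factor: (light/heavy ratio at top) * (heavy/light ratio at bottom)
    separation = (x_d / (1 - x_d)) * ((1 - x_b) / x_b)
    return math.log(separation) / math.log(alpha)

# Illustrative case: 95% light key overhead, 5% in the bottoms, alpha = 2.5
n_min = fenske_min_stages(0.95, 0.05, 2.5)
print(f"N_min ≈ {n_min:.2f} theoretical stages")  # prints "N_min ≈ 6.43 theoretical stages"
```

A real design goes further (minimum reflux, actual reflux ratio, tray efficiency or packing HETP); Fenske only bounds the stage count from below.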

🖼️🖼️ #Distillation-Based Cross-Model Transferable Adversarial #Attack for Remote Sensing #Image #Classification ✍️ Xiyu Peng et al. 🔗 brnw.ch/21x1Wme

This is the most technological paper I have been involved in for a while. It presents a below-threshold error reduction in single photons through photon #distillation. While it is not focused on #quantumerrorcorrection, it should facilitate it for integrated #photonic platforms.

Kister #Distillation Symposium returns to #AIChE Spring Meeting, April 6-10, Dallas bit.ly/4hNBNHX #AIChESpring

16M+ fake exchanges, 24,000 fake accounts: #Anthropic alleges Chinese AI firms used #distillation attack on #Claude to train their own #AImodels in record time, cutting corners on safety. Could this threaten #AI #security? medianama.com/2026/02/223-an…

This is hilarious 🤣 Anthropic is now complaining that others are training models on their data. Pot, meet kettle!! #Anthropic #Distillation #LLMTraining

We’ve identified industrial-scale distillation attacks on our models by DeepSeek, Moonshot AI, and MiniMax. These labs created over 24,000 fraudulent accounts and generated over 16 million exchanges with Claude, extracting its capabilities to train and improve their own models.

Video: Hear from Henry Kister on what's ahead at the Kister #Distillation Symposium at AIChE’s #GCPS and #AIChESpring in Dallas, April 6-10. bit.ly/4hNBNHX #ProcessSafety #chemicalengineering

Correctness-Aware Knowledge Distillation for Enhanced Student Learning Ishan Mishra, Deepak Mishra, Jinjun Xiong. Action editor: Dmitry Kobak. openreview.net/forum?id=XpRXm… #learns #distillation #mentor

#Claude learnt everything it knows from what humans wrote, created and shared (mostly without permission) and now they're crying wolf about #Distillation by others? Irony just deployed an AI Agent to commit suicide! 😂😂😂😂😂

Efficient Distillation of Classifier-Free Guidance using Adapters Cristian Perez Jensen, Seyedmorteza Sadat. Action editor: Chang Xu. openreview.net/forum?id=uMz8F… #distillation #guided #models

Hear from Henry Kister on what's ahead at the Kister #Distillation Symposium at AIChE’s #GCPS and #AIChESpring in Dallas, April 6-10. See full video and blog post: bit.ly/4hNBNHX #ProcessSafety

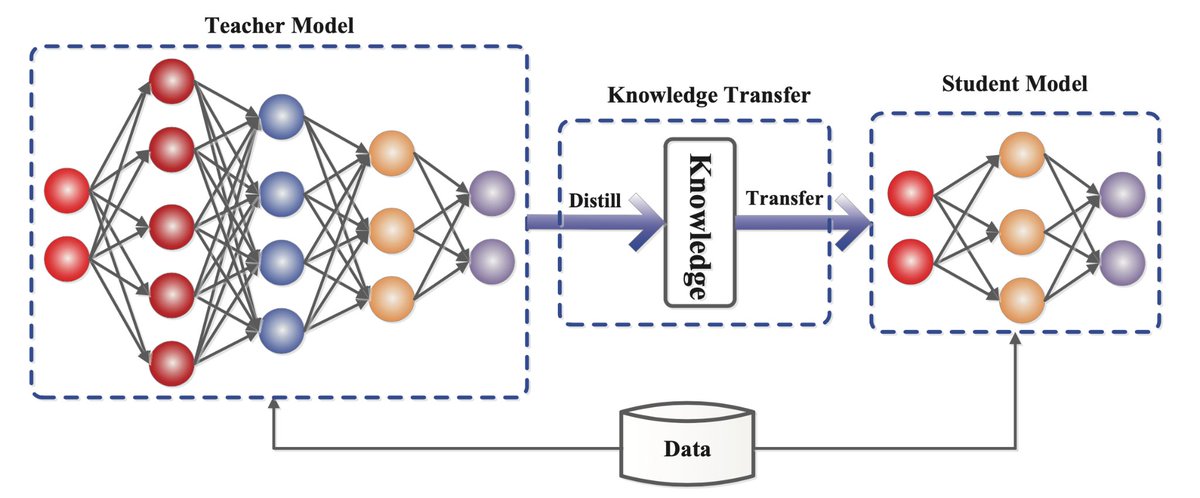

Model distillation & quantization: big model → small model. GPT-4 (rumored 1.8T params) → GPT-4o (rumored 200B). Performance down 5%, cost down 80%. Worth it? Most scenarios: yes. Core scenarios: no. #AI #Distillation #Optimization
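
The post bundles two techniques. For the quantization half, here is a minimal sketch assuming symmetric per-tensor int8 quantization (one common scheme among several). It shows where the memory saving comes from (1 byte per weight instead of 4 for fp32) and that the per-weight rounding error is bounded by half the scale. It does not reproduce the post's 5%/80% figures and says nothing about how any GPT model was actually built; the weights are random stand-ins.

```python
import random

def quantize_int8(weights):
    """Symmetric per-tensor int8 quantization: w ≈ scale * q, with q in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Clip is a safeguard; with this scale every w / scale already lies in [-127, 127].
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize_int8(q, scale):
    """Map int8 codes back to approximate float weights."""
    return [v * scale for v in q]

random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(10_000)]  # stand-in for a weight tensor
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(f"fp32: {4 * len(weights)} bytes, int8: {len(q)} bytes")
print(f"max abs error {max_err:.2e} <= scale/2 = {scale / 2:.2e}")
```

Per-channel scales or calibration on real activations usually reduce the error further; the per-tensor version is just the simplest to read.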

Efficient Knowledge Injection in LLMs via Self-Distillation Kalle Kujanpää, Pekka Marttinen, Harri Valpola, Alexander Ilin. Action editor: Alessandro Sordoni. openreview.net/forum?id=drYpd… #distillation #retrieval #leveraging

What we built🏗️, shipped🚢 and shared🚀 last week: SuperNova: Distillation of Llama 3.1 🛣️ Learn LLM Engineering, step-by-step: 🚉 SFT 👍 DPO 🥪 Merging ⚗️ Distillation 🚇 Pretraining Ready? Start here: youtube.com/watch?v=dCU5Ox… #SLM #Distillation #ArceeSuperNova

Distilled Circuits: A Mechanistic Study of Internal Restructuring in Knowledge Distillation Reilly Haskins, Benjamin Adams. Action editor: Ehsan Amid. openreview.net/forum?id=S1KJE… #distillation #similarity #distilgpt2

LumiNet: Perception-Driven Knowledge Distillation via Statistical Logit Calibration Md. Ismail Hossain, M M Lutfe Elahi, Sameera Ramasinghe et al. Action editor: Manzil Zaheer. openreview.net/forum?id=3rU1l… #distillation #distill #feature

GREECE – Let’s take a look into the #Smyrlakis brothers’ family business of more than 20 years, Roots, and their rich history in #distillation in #Greece. thenationalherald.com/a-spotlight-on…

🍇🍇 At the agricultural show, the European Commissioner for Agriculture announced the release of €40 million from the European crisis reserve to fund a distillation campaign. #viticulture #distillation dlvr.it/TR8yXr

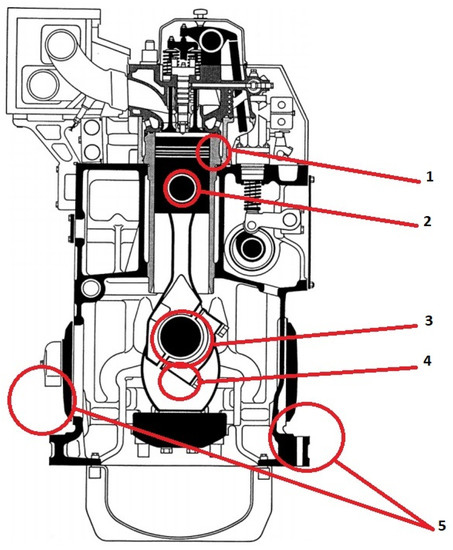

✨ #HighlyCitedPaper Study of the Relationship between the Level of #LubricatingOil Contamination with #Distillation #Fuel and the Risk of #Explosion in the Crankcase of a #Marine Trunk Type #Engine 👉 brnw.ch/21wXGFN #mdpienergies #openaccess

#KnowledgeDistillation #知识蒸馏 (knowledge distillation)/Model #Distillation In machine learning, knowledge distillation (#Wissensdestillation) or model distillation (#Modelldestillation) is the process of transferring knowledge from a large model to a smaller model. yizuo-media.com/yizuo/show/det…
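
That definition can be made concrete with the classic soft-target formulation (Hinton et al., 2015), offered here as one common recipe rather than the only one: the student is trained to match the teacher's temperature-softened output distribution, plus an ordinary cross-entropy term on the true label. The temperature of 2.0, the weighting alpha of 0.5, and the logits are illustrative assumptions. The T² factor keeps the soft-target gradients on a comparable scale as the temperature changes.

```python
import math

def softmax(logits, temperature=1.0):
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def kl_divergence(p, q):
    """KL(p || q) for discrete distributions; terms with p_i == 0 contribute 0."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def distillation_loss(student_logits, teacher_logits, label, temperature=2.0, alpha=0.5):
    """Soft-target KL (scaled by T^2) blended with hard-label cross-entropy."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    soft = kl_divergence(p_teacher, p_student) * temperature ** 2
    hard = -math.log(softmax(student_logits)[label])  # ordinary CE at T = 1
    return alpha * soft + (1 - alpha) * hard

teacher = [4.0, 1.0, 0.5]        # hypothetical teacher logits for 3 classes
student_close = [3.5, 1.2, 0.4]  # student that already mimics the teacher
student_far = [0.5, 3.0, 1.0]    # student that disagrees with the teacher
print(distillation_loss(student_close, teacher, label=0))
print(distillation_loss(student_far, teacher, label=0))
```

The student whose logits track the teacher's gets the lower loss; in training, this scalar would be minimized with respect to the student's parameters only.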

From grain to glass: how vodka’s raw materials and distillation shape markets and identity. Read the short explainer: wix.to/lrKOVtP #Vodka #Spirits #Distillation #AgTech

zjdistill.com/products/liquo… Elevate your distillation process with our high-quality liquor stills! Perfect for both small and large-scale producers. Crafted for efficiency and flavor. Explore our range now: #Distillation #CraftSpirits

Turns out you can drop all attention from a 1B‑param model and still hit 14.11 ppl vs 13.86 teacher, just by first distilling into a linearized attention via kernel trick, then into Mamba. No hybrid needed 🤯 #ML #Distillation arxiv.org/abs/2604.14191
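
The "linearized attention via kernel trick" step can be sketched generically. The sketch below assumes the elu(x) + 1 feature map from Katharopoulos et al. (2020); the paper linked above may use a different feature map, and its second stage (distilling into Mamba) is not shown. It checks that the O(n) form, which summarizes all keys and values once, matches the O(n²) form that scores every query against every key. Sizes and inputs are random placeholders.

```python
import math
import random

def phi(x):
    """Feature map phi(x) = elu(x) + 1, elementwise; always positive."""
    return [v + 1.0 if v > 0 else math.exp(v) for v in x]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def kernel_attention_quadratic(Q, K, V):
    """O(n^2) reference: weight_ij = phi(q_i) . phi(k_j), normalized over j."""
    phi_k = [phi(k) for k in K]
    out = []
    for q in Q:
        phi_q = phi(q)
        weights = [dot(phi_q, pk) for pk in phi_k]
        total = sum(weights)
        out.append([sum(w * v[c] for w, v in zip(weights, V)) / total
                    for c in range(len(V[0]))])
    return out

def linear_attention(Q, K, V):
    """O(n) version: summarize all keys/values once, then reuse for every query."""
    d, dv = len(K[0]), len(V[0])
    S = [[0.0] * dv for _ in range(d)]  # S = sum_j phi(k_j) v_j^T
    z = [0.0] * d                       # z = sum_j phi(k_j)
    for k, v in zip(K, V):
        pk = phi(k)
        for a in range(d):
            z[a] += pk[a]
            for c in range(dv):
                S[a][c] += pk[a] * v[c]
    out = []
    for q in Q:
        pq = phi(q)
        denom = dot(pq, z)
        out.append([sum(pq[a] * S[a][c] for a in range(d)) / denom
                    for c in range(dv)])
    return out

rng = random.Random(0)
n, d, dv = 6, 4, 3
Q = [[rng.gauss(0, 1) for _ in range(d)] for _ in range(n)]
K = [[rng.gauss(0, 1) for _ in range(d)] for _ in range(n)]
V = [[rng.gauss(0, 1) for _ in range(dv)] for _ in range(n)]
ref = kernel_attention_quadratic(Q, K, V)
fast = linear_attention(Q, K, V)
max_diff = max(abs(a - b) for ra, rb in zip(ref, fast) for a, b in zip(ra, rb))
print(f"max difference between O(n^2) and O(n) forms: {max_diff:.1e}")
```

The causal variant replaces the global sums S and z with running prefix sums, which turns attention into an RNN-style recurrence.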

#Distillation is the process whereby new models are trained on the outputs of existing LLMs; it is cheaper & faster. However, devs have discovered that biases remain even when scrubbed from the original model, inherited through “subliminal learning” - by @TheRegister theregister.com/2026/04/15/llm…

Distillation is becoming its own science. This new paper suggests on-policy distillation has enough quirks and mechanisms that copying a stronger model is no longer a simple compression story. Source: arxiv.org/abs/2604.13016 #Distillation #LLMs #AIResearch
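
A rough sketch of what "on-policy" means here, under a common generic formulation that is not necessarily the paper's setup: the student samples its own tokens, the teacher scores each sampled token, and the per-token signal log p_student(x) − log p_teacher(x) averages to the reverse KL, KL(student ‖ teacher). The two next-token distributions are hypothetical; real models condition on the prefix and would backpropagate this signal into the student.

```python
import math
import random

# Hypothetical next-token distributions over a 4-token vocabulary.
student = {"a": 0.4, "b": 0.3, "c": 0.2, "d": 0.1}
teacher = {"a": 0.6, "b": 0.2, "c": 0.15, "d": 0.05}

def exact_reverse_kl(p_student, p_teacher):
    """KL(student || teacher), computed exactly over the vocabulary."""
    return sum(p * math.log(p / p_teacher[tok]) for tok, p in p_student.items())

def on_policy_estimate(p_student, p_teacher, n_samples, rng):
    """Sample tokens from the *student* (on-policy) and average
    log p_s(x) - log p_t(x); its expectation is KL(student || teacher)."""
    tokens = list(p_student)
    weights = [p_student[t] for t in tokens]
    samples = rng.choices(tokens, weights=weights, k=n_samples)
    return sum(math.log(p_student[t]) - math.log(p_teacher[t]) for t in samples) / n_samples

rng = random.Random(0)
exact = exact_reverse_kl(student, teacher)
estimate = on_policy_estimate(student, teacher, 100_000, rng)
print(f"exact reverse KL: {exact:.4f}, on-policy estimate: {estimate:.4f}")
```

Sampling from the student rather than the teacher is the key difference from classic off-policy distillation, and reverse KL tends to be mode-seeking, which is one reason the behavior differs from plain compression.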

Hey, quick reminder 👇 The DigiVac Vapor Pressure Controller (VPC) is built for precise vapor pressure control in distillation & rotovap setups. Better separation. More repeatable runs. Less pump wear. 💨 👉 hubs.li/Q04bVDZG0 #vacuum #distillation #labtech #processcontrol
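
Why pressure control matters for distillation and rotovap work: a liquid's boiling point falls as the pressure above it drops. A minimal sketch using the Antoine equation, log10(P) = A − B / (C + T), with the commonly quoted constants for water (P in mmHg, T in °C, valid roughly 1 to 100 °C). This is a generic physics illustration, not a description of how the DigiVac VPC works internally.

```python
import math

# Antoine constants for water (P in mmHg, T in °C), commonly quoted for ~1-100 °C.
A, B, C = 8.07131, 1730.63, 233.426

def boiling_point_c(pressure_mmhg: float) -> float:
    """Invert log10(P) = A - B / (C + T) to get the boiling temperature at pressure P."""
    return B / (A - math.log10(pressure_mmhg)) - C

for p in (760, 200, 50):
    print(f"{p:>4} mmHg -> water boils at about {boiling_point_c(p):.1f} °C")
```

Because the boiling point shifts by tens of degrees over this pressure range, small pressure drifts translate directly into run-to-run variation, which is the case for holding the vacuum level steady.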

Whether you’re finishing a metal surface or refining a chemical compound, the diameter of your glass beads changes the outcome. Check out our full range of sizes today! 🔗 bit.ly/SolidGlassBeads #Chemistry #Distillation #LabEquipment #GlassBeads #PropperMfg

McNutt v DOJ: #5thCir affirms: 150-yr-old ban on at-home #distillation of spirits is outside Congress's #taxing power & violates #NecessaryandProperClause; finds all plaintiffs have #standing #alcohol #HobbyDistillers #appellatetwitter #lawtwitter baffc.net/4mkwDqR

What happens if you ask a model like Big Pickle "Hi, what LLM model are you?" 100 times? Well, at some point it might get confused. This smells like distillation a bit too much 😀 #ai #distillation

Through a selection of archives and emblematic objects, the exhibition by the #Archives de #Haute-Saône offers an immersion in the history and know-how of #distillation, from harvesting the fruit to producing the eau-de-vie. archives.haute-saone.fr

What is the best packing for high-pressure distillation? 👉 Metal tower packing (Pall Ring, Structured Packing) offers: ✔ High strength ✔ Heat resistance ✔ Efficient mass transfer Read more: pxdaier.com/tower-packing-… #TowerPacking #Distillation #ChemicalEngineering D-A-I-E-R

🧵 4/6 Eric Schmidt warned: knowledge from giant data centers easily transfers to small devices. DeepSeek proved distillation works. Tiiny made it hardware. What cost billions now costs $455. Superintelligence in your pocket — not sci-fi. August 2026. #Distillation #AIForEveryone

We're excited to share that Iluvatar Labs has joined the NVIDIA Inception program, giving us access to the technical leadership and ecosystem partners to help scale our research from MVP to deployment. #generativeAI #distillation #compression #RLHF #AIsafety #NVIDIAInception

“…observing that #Distillation attacks had increased over the…year [2025].”🧐👇 Joe Khawam ‘The Case for Imposing Costs on China’s #AI #Distillation Campaigns’@just_security (4/4) justsecurity.org/134124/costs-c…

“…systematically harvest model outputs—a process known as knowledge #Distillation. Anthropic revealed that three Chinese #AI laboratories—DeepSeek, Moonshot AI and MiniMax—had used more than 24,000 fraudulent accounts to generate over 16 million exchanges with Claude,…”(2/4)
