#activationfunctions search results

Softmax is ideal for output layers: it converts a model's raw scores into class probabilities that sum to 100%. It is rarely used as a hidden-layer activation. #softmax #activationfunctions #machinelearning
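To make the "probabilities summing to 100%" point concrete, here is a minimal sketch of softmax in plain Python. The function name `softmax` and the sample scores are illustrative assumptions, not taken from the post.

```python
import math

def softmax(scores):
    """Turn a list of raw scores (logits) into probabilities that sum to 1."""
    # Subtracting the maximum is a common trick to avoid overflow in exp();
    # it leaves the resulting probabilities unchanged.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical scores for a three-class output layer.
probs = softmax([2.0, 1.0, 0.1])
print(probs)       # largest probability goes to the largest score
print(sum(probs))  # approximately 1.0
```

The ordering of the scores is preserved, so the class with the highest raw score also gets the highest probability.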

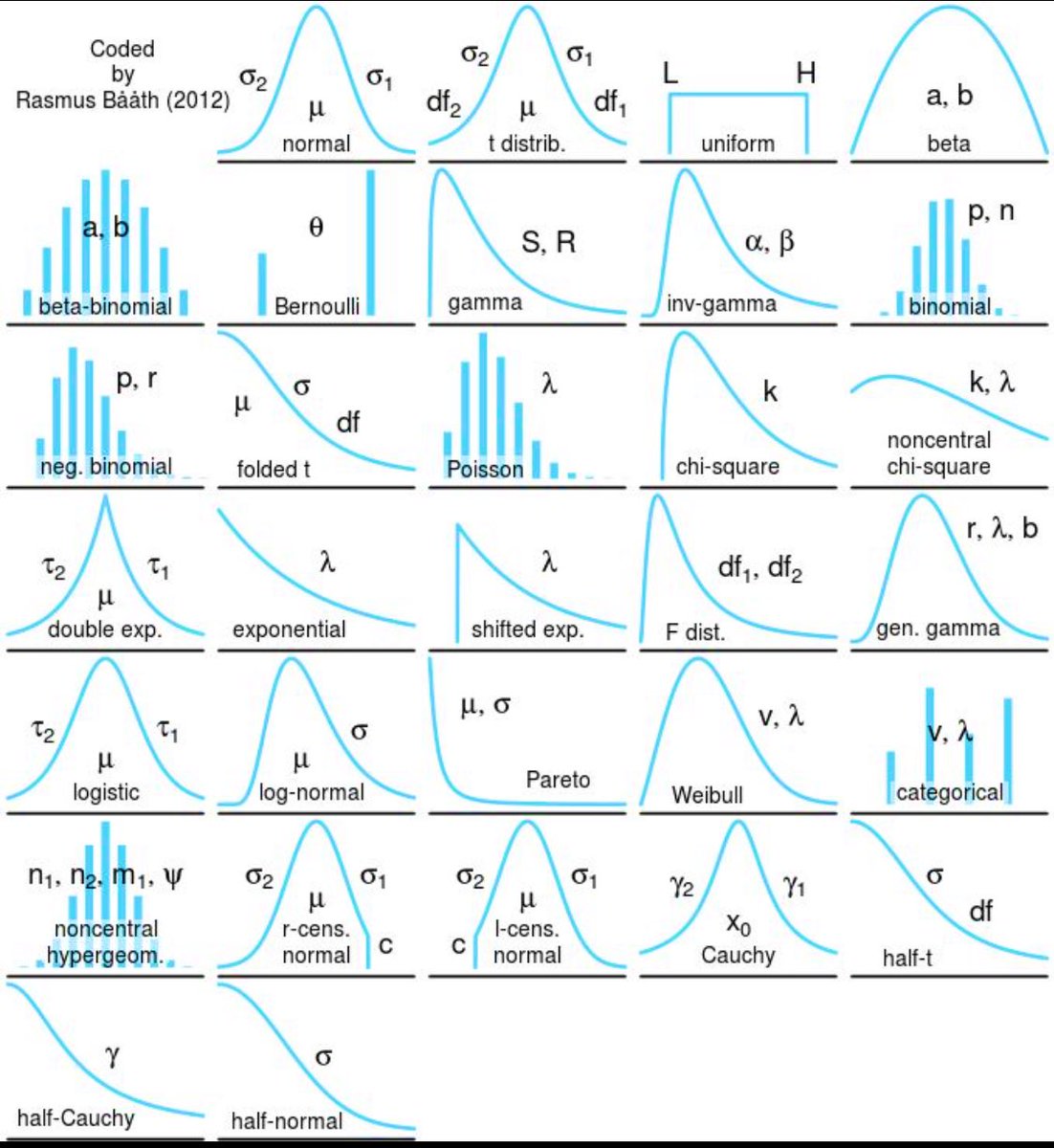

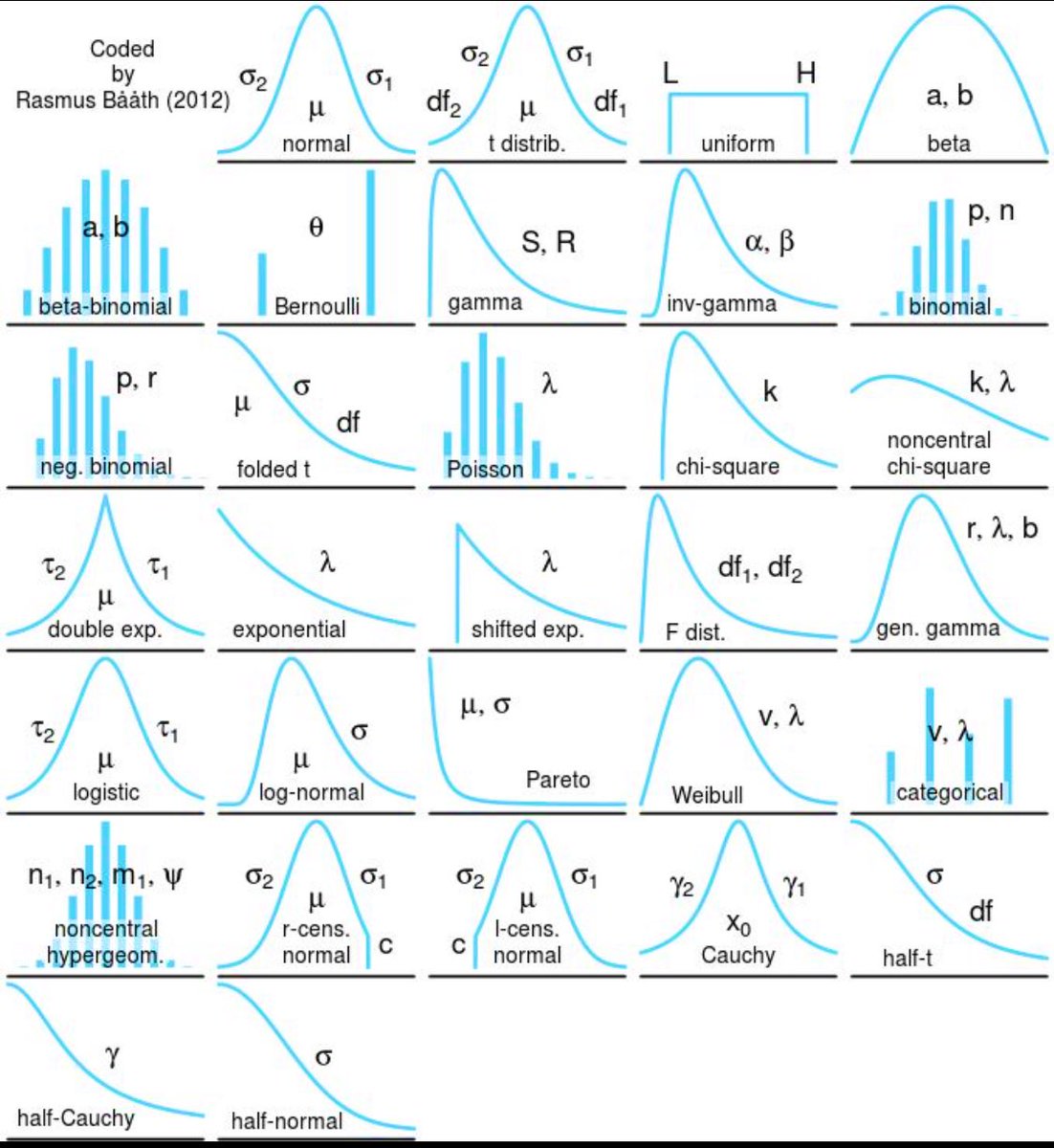

Know your distributions. Normal ain’t the only one. #ActivationFunctions #ProbabilityDistribution #WeekendStudies

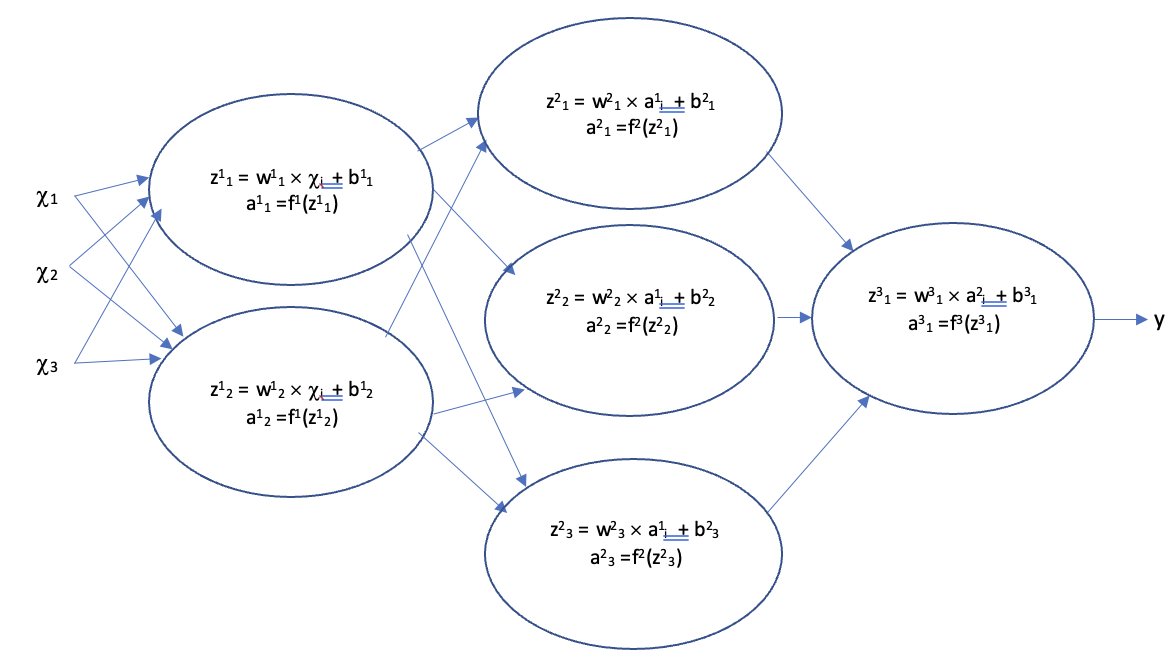

Activation functions control the range of each neuron's output, and a well-chosen one improves model accuracy by avoiding problems such as saturated or dead neurons. #activationfunctions #machinelearning #relu

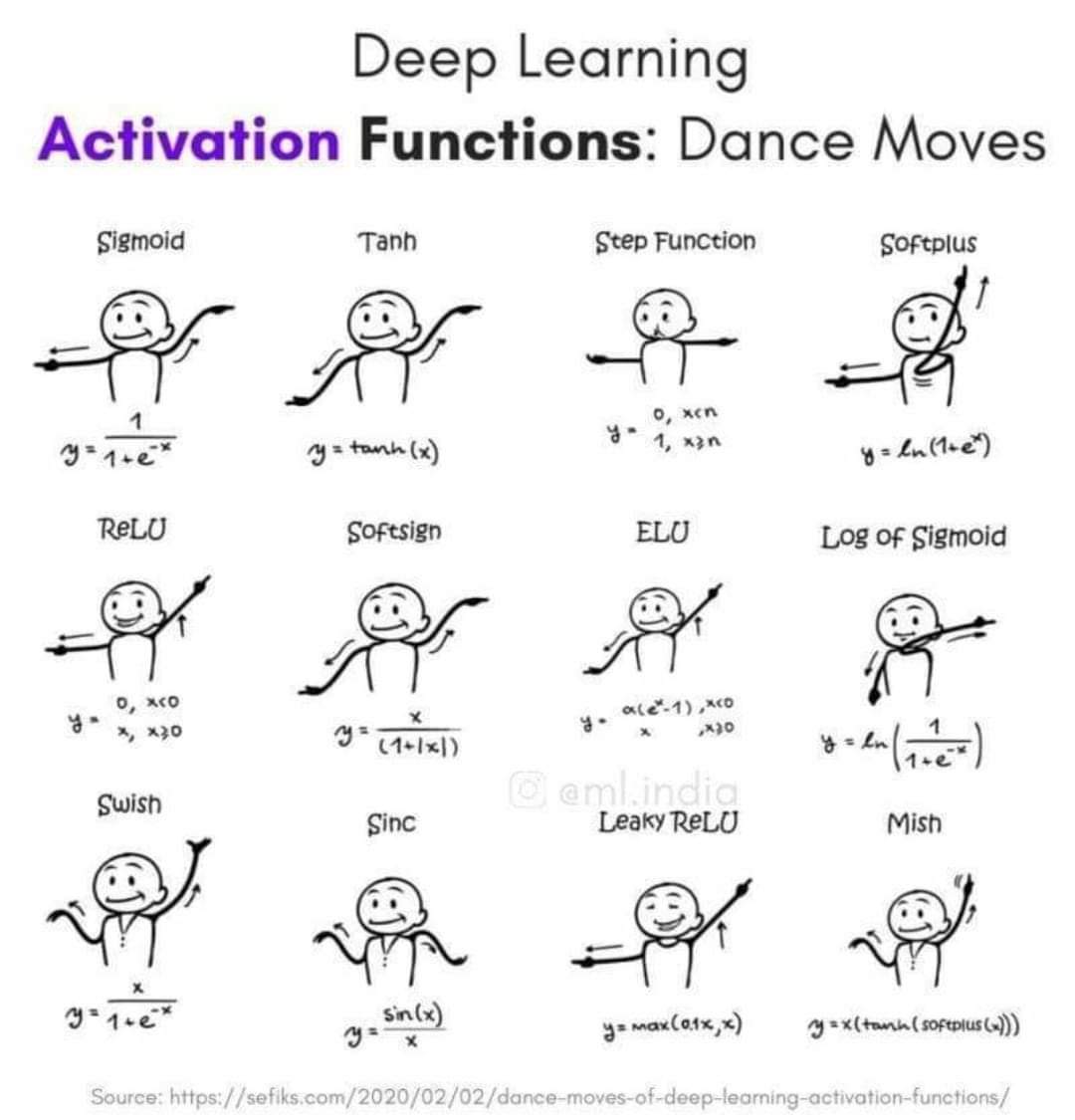

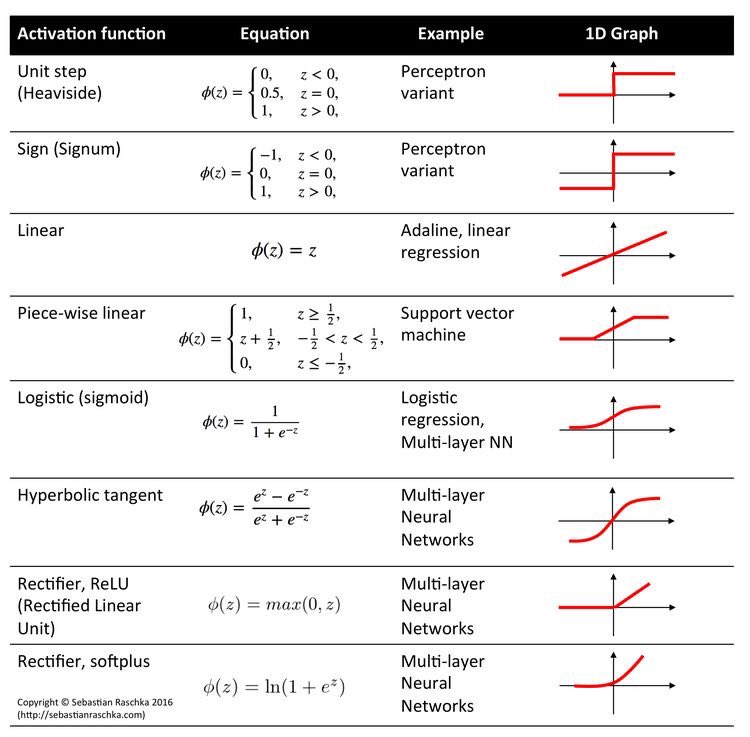

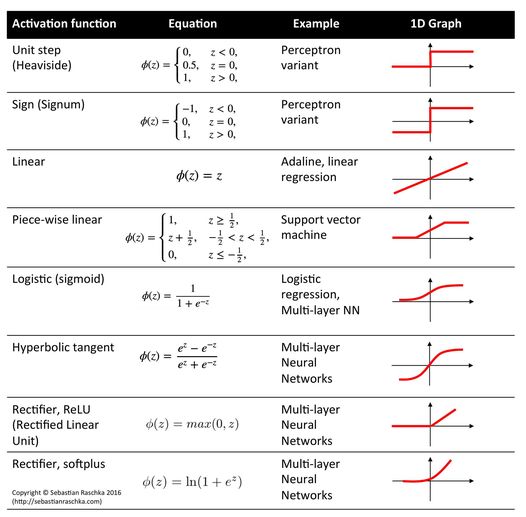

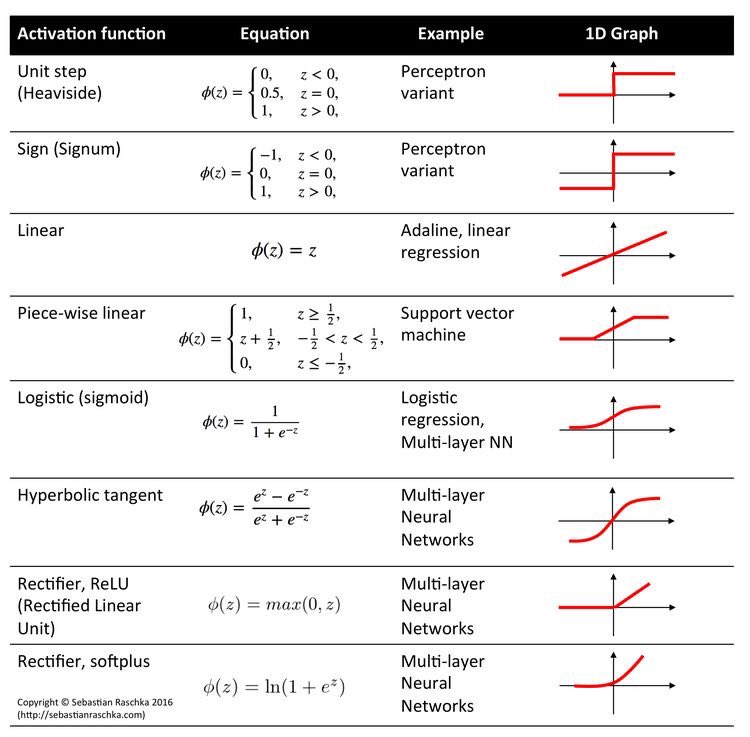

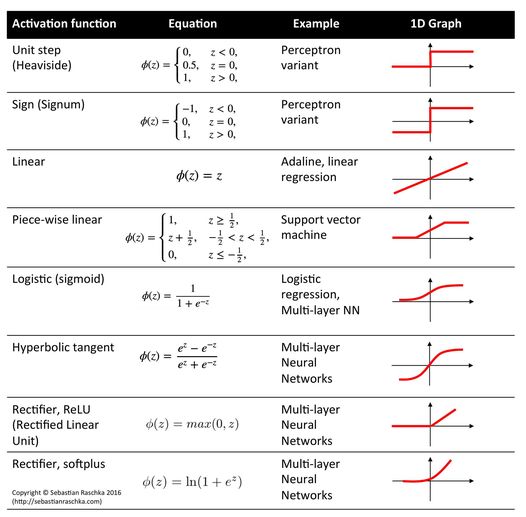

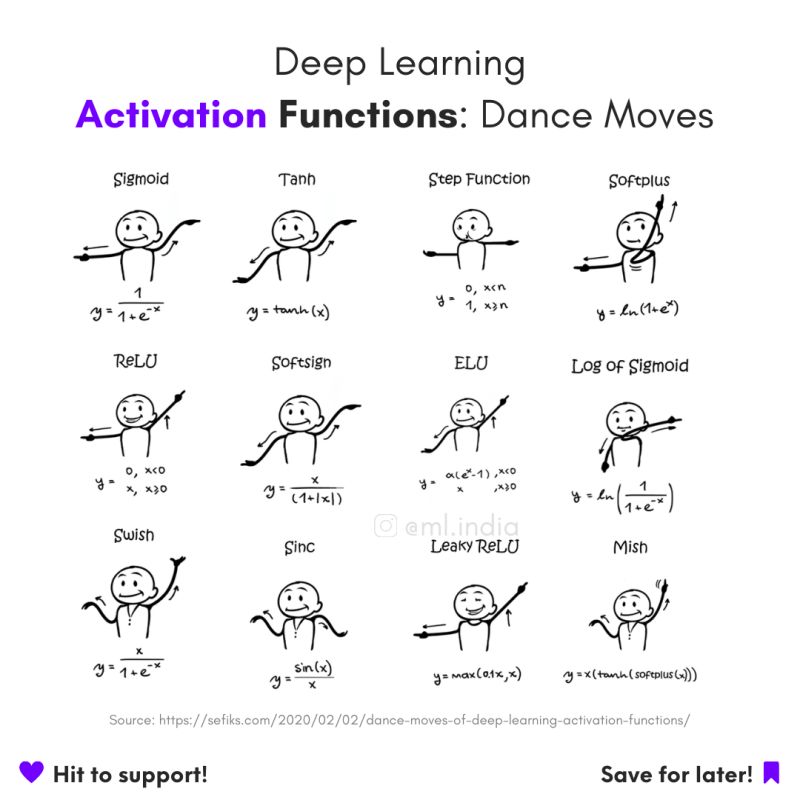

Looking for the right type of Activation Function for your Neural Network Model? Here's a list describing each and every one. Don't forget to look at the last image. 🧵👇 #ActivationFunctions #deeplearning #python #codanics #neuralnetworks #machinelearning

ReLU emerges as the fastest activation function after optimization in benchmarks. #machinelearning #activationfunctions #neuralnetworks

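Activation functions such as sigmoid and tanh shape how machine learning models learn: the right choice can speed up training and reduce error. Unlike ReLU, both keep a nonzero gradient everywhere, so they avoid dead neurons, although they can saturate for large inputs. #sigmoid #activationfunctions #machinelearning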

RT aammar_tufail RT @arslanchaos: Looking for the right type of Activation Function for your Neural Network Model? Here's a list describing each and every one. Don't forget to look at the last image. 🧵👇 #ActivationFunctions #deeplearning #python #cod…

Neural Network Architectures and Activation Functions: A Gaussian Process Approach - freecomputerbooks.com/Neural-Network… Look for "Read and Download Links" section to download. #NeuralNetworks #ActivationFunctions #GaussianProcess #DeepLearning #MachineLearning #GenAI #GenerativeAI

Activation functions #NeuralNetwork #DeepLearning #ActivationFunctions

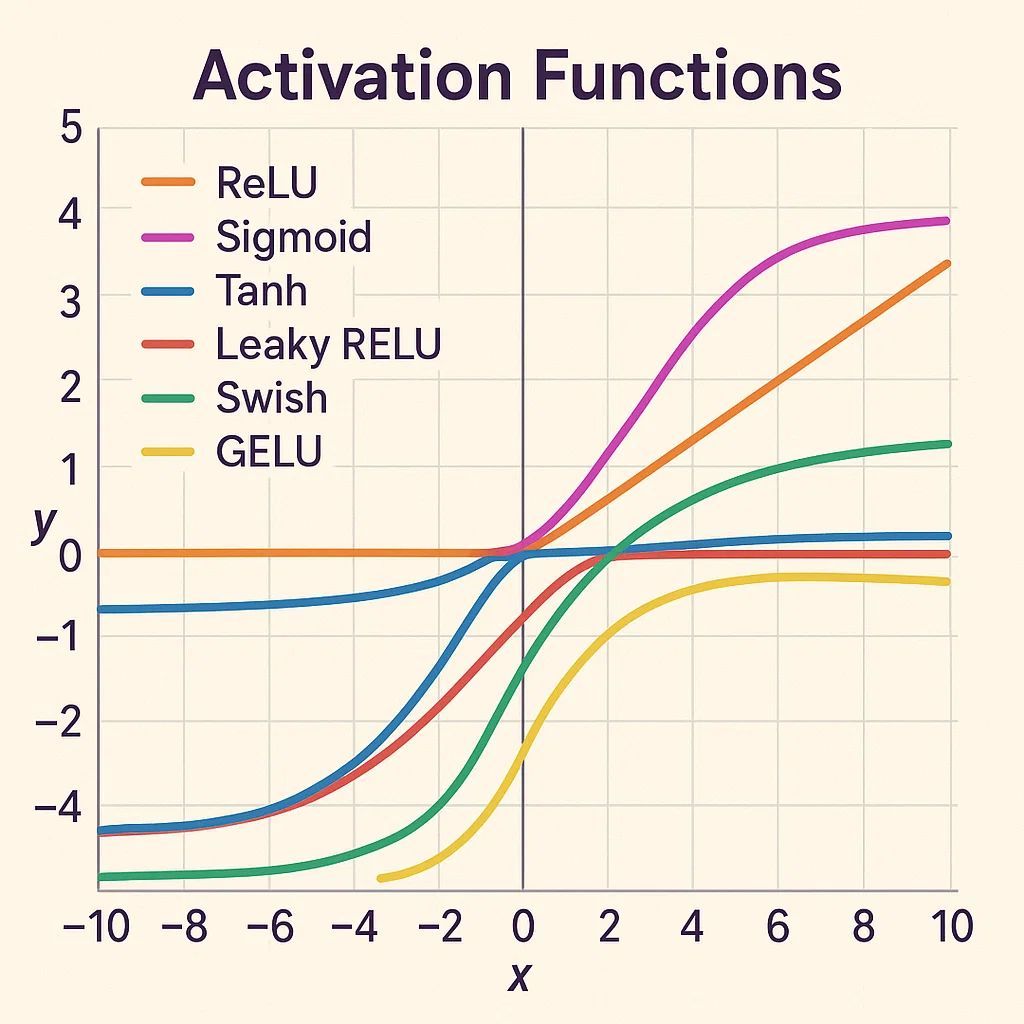

Activation functions in deep networks aren't just mathematical curiosities—they're the decision makers that determine how information flows. ReLU, sigmoid, and tanh each shape learning differently, influencing AI behavior and performance. #DeepNeuralNetworks #ActivationFunctions…

🧠#ActivationFunctions in #DeepLearning! They introduce non-linearity, enabling neural networks to learn complex patterns. Key types: Sigmoid, Tanh, ReLU, Softmax. Essential for enhancing model complexity & stability. 🚀 #AI #MachineLearning #DataScience

RT The Importance and Reasoning behind Activation Functions dlvr.it/SCZlJ8 #activationfunctions #neuralnetworks #machinelearning #datascience

💥 An overview of activation functions for Neural Networks! Source: @BDAnalyticsnews #NeuralNetwork #ActivationFunctions

RT Understanding ReLU: The Most Popular Activation Function in 5 Minutes! dlvr.it/RlBysm #relu #activationfunctions #artificialintelligence #machinelearning

Activation functions: the secret of neural networks! They determine outputs based on inputs & weights. Quantum neural networks take it even further by implementing any activation function without measuring outputs. Truly mind-blowing! #ActivationFunctions #QuantumNeuralNetwor

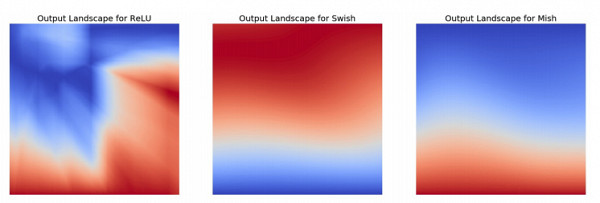

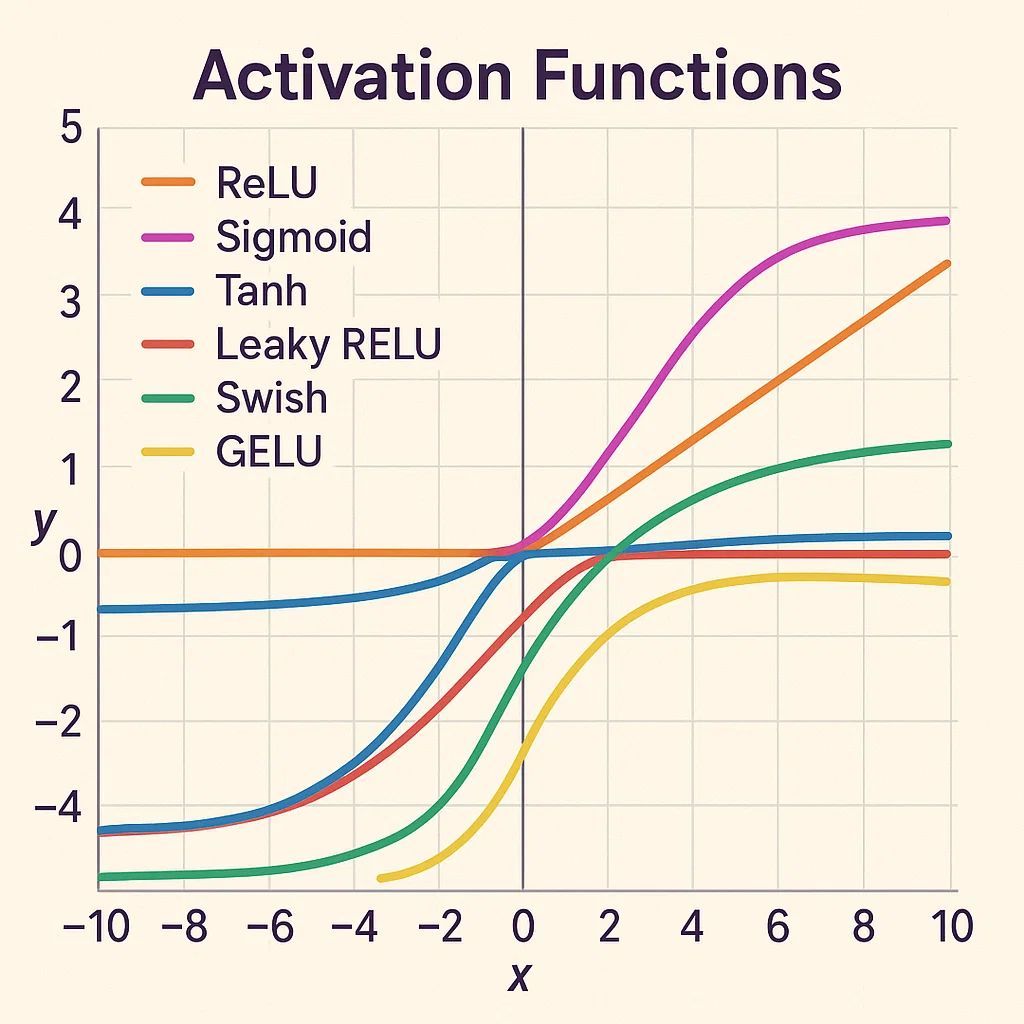

RT On the Disparity Between Swish and GELU dlvr.it/RtvGNy #activationfunctions #neuralnetworks #artificialintelligence
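The linked article is not reproduced here. As background only, the sketch below uses the commonly cited definitions of the two functions, Swish as `x * sigmoid(beta * x)` and exact GELU as `x * Phi(x)` with `Phi` the standard normal CDF, and prints them side by side. The function names and sample inputs are assumptions for illustration.

```python
import math

def swish(x, beta=1.0):
    # Swish: x * sigmoid(beta * x). With beta = 1 it is also called SiLU.
    return x / (1.0 + math.exp(-beta * x))

def gelu(x):
    # Exact GELU: x * Phi(x), where Phi is the standard normal CDF,
    # written here with the error function from the standard library.
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))

for x in (-3.0, -1.0, 0.0, 1.0, 3.0):
    print(f"x={x:+.1f}  swish={swish(x):+.4f}  gelu={gelu(x):+.4f}")
```

Both curves pass through zero and approach the identity for large positive inputs; they differ mainly in how quickly they flatten for negative inputs.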

Manual Of Activations in Deep Learning dlvr.it/Rl3PQC #machinelearning #activationfunctions #datascience

RT Using Activation Functions in Neural Nets dlvr.it/S0PBTs #datascience #algorithms #activationfunctions #neuralnetworks

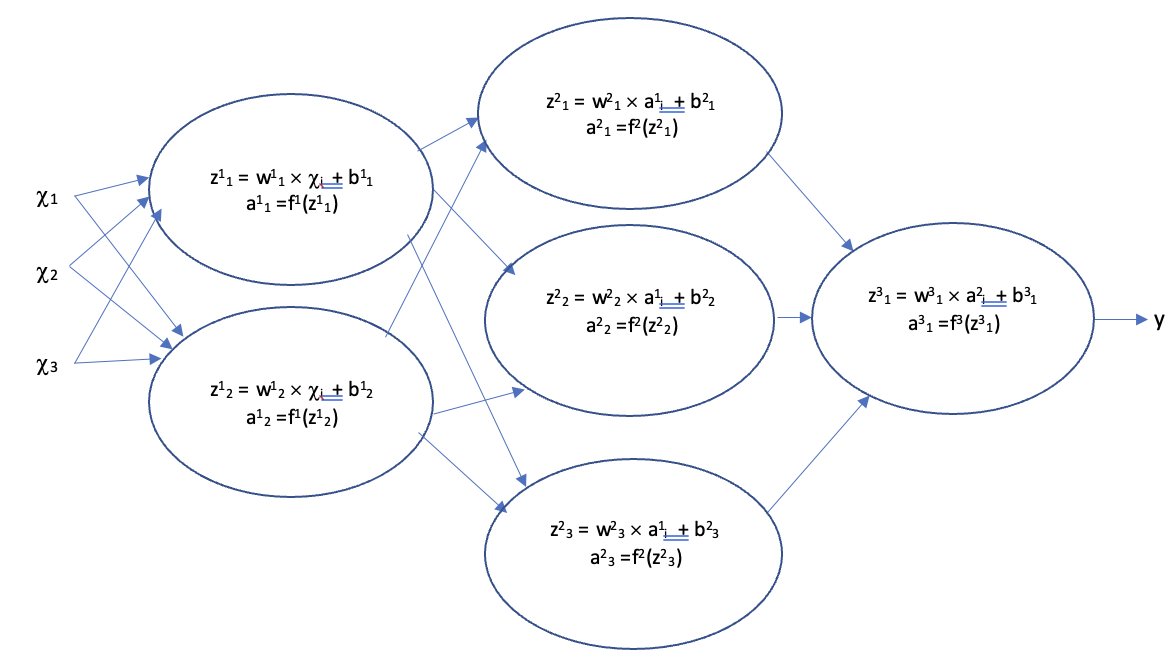

Neuron Aggregation & Activation Functions – In ANNs, aggregation combines weighted inputs, while activation functions introduce non-linearity letting networks learn complex patterns instead of staying linear. #DeepLearning #MachineLearning #ActivationFunctions #AI #NeuralNetworks
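The two steps named in the post, aggregation and activation, can be sketched for a single neuron as follows. The helper names `neuron` and `relu`, and the sample weights, are hypothetical and chosen only for illustration.

```python
def relu(z):
    # ReLU activation: passes positive values through, clips negatives to 0.
    return max(0.0, z)

def neuron(inputs, weights, bias, activation):
    # Aggregation: weighted sum of the inputs plus a bias term.
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    # Activation: a non-linear function applied to the aggregated value.
    return activation(z)

# Hypothetical inputs and weights.
print(neuron([1.0, 2.0], [0.5, -1.0], 0.0, relu))  # aggregate is -1.5, ReLU clips it to 0.0
print(neuron([1.0, 2.0], [0.5, 1.0], 0.5, relu))   # aggregate is 3.0, passed through unchanged
```

Passing the activation as an argument keeps the aggregation step identical while the non-linearity is swapped out.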

🧠 Let’s activate some neural magic! ReLU, Sigmoid, Tanh & Softmax each shape how your network learns & predicts. From binary to multi-class—choose wisely to supercharge your model! ⚡ 🔗buff.ly/Cx76v5Y & buff.ly/5PzZctS #AI365 #ActivationFunctions #MLBasics

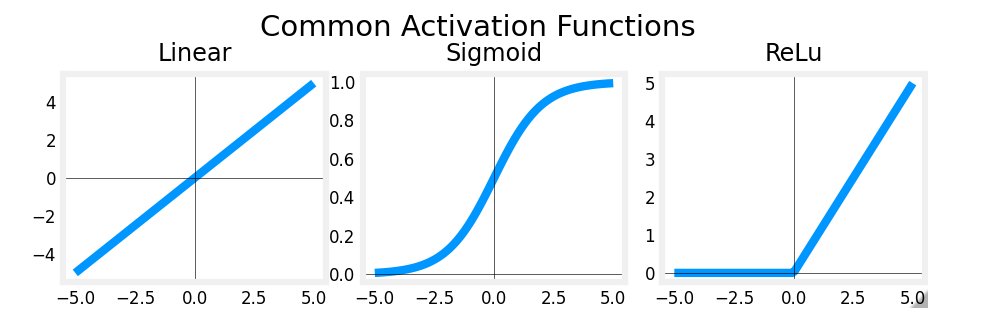

Day 28 🧠 Activation Functions, from scratch

- ReLU: max(0, x), simple and fast
- Sigmoid: squashes to (0, 1), good for probabilities
- Tanh: like sigmoid but centered at 0, with range (-1, 1)

#MLfromScratch #DeepLearning #ActivationFunctions #100DaysOfML
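The three functions listed in this post can be written from scratch in a few lines of Python. This is a minimal scalar sketch, not the post author's code; the sign branch in `sigmoid` is an added assumption to keep `exp()` from overflowing on large negative inputs.

```python
import math

def relu(x):
    # ReLU: max(0, x)
    return max(0.0, x)

def sigmoid(x):
    # Sigmoid squashes any real number into (0, 1).
    # Branching on the sign avoids overflow in exp() for large |x|.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def tanh(x):
    # Tanh is a rescaled sigmoid centered at 0: tanh(x) = 2 * sigmoid(2x) - 1
    return 2.0 * sigmoid(2.0 * x) - 1.0

for x in (-2.0, 0.0, 2.0):
    print(x, relu(x), sigmoid(x), tanh(x))
```

Writing tanh in terms of sigmoid makes the "like sigmoid but centered at 0" relationship explicit; in practice `math.tanh` gives the same values.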

🚀 Explore the Activation Function Atlas — your visual & mathematical map through the nonlinear heart of deep learning. From ReLU to GELU, discover how activations shape AI intelligence. 🧠📈 🔗 programming-ocean.com/knowledge-hub/… #AI #DeepLearning #ActivationFunctions #MachineLearning

🚀 Activation Functions: The Secret Sauce of Neural Networks! They add non-linearity, helping models grasp complex patterns! 🧠 🔹 ReLU🔹Sigmoid🔹Tanh Power up your AI models with the right activation functions! Follow #AI365 👉 shorturl.at/1Ek3f #ActivationFunctions 💡🔥

Neural Networks: Explained for Everyone. The building blocks of artificial intelligence, powering modern machine learning applications ... justoborn.com/neural-network… #activationfunctions #aiapplications #AIEthics

Builder Perspective

- #AttentionMechanisms: Multi-head attention patterns
- Layer Configuration: Depth vs. width tradeoffs
- Normalization Strategies: Pre-norm vs. post-norm
- #ActivationFunctions: Selection and placement

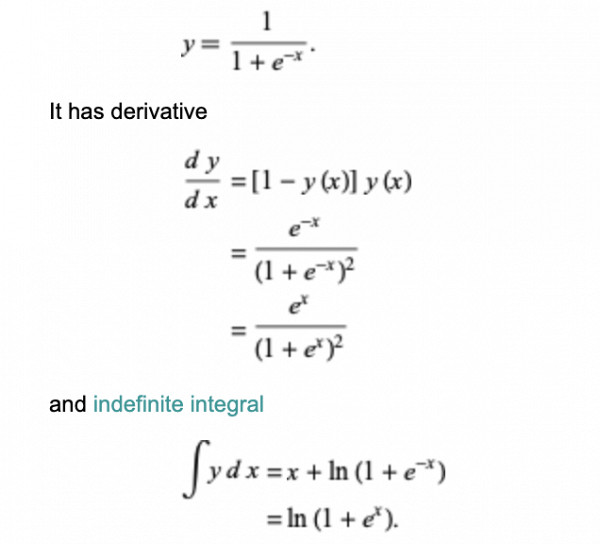

4️⃣ Sigmoid’s Secret 🤫 Why do we use sigmoid activation? It adds non-linearity, letting the network learn complex patterns! 📈 sigmoid = @(z) 1 ./ (1 + exp(-z)); Without it, the network is just fancy linear regression! 😱 #ActivationFunctions #DeepLearning
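The claim that a network without an activation is "just fancy linear regression" can be checked numerically. The sketch below is a Python counterpart of the MATLAB one-liner plus a toy two-layer network with scalar weights; `two_layer`, `collapsed`, and the weight values are hypothetical names and numbers chosen for illustration.

```python
import math

def sigmoid(z):
    # Python counterpart of the MATLAB one-liner: 1 ./ (1 + exp(-z))
    return 1.0 / (1.0 + math.exp(-z))

# Hypothetical scalar weights and biases for a two-layer toy network.
w1, b1, w2, b2 = 2.0, 1.0, 3.0, -1.0

def two_layer(x, activation=None):
    h = w1 * x + b1              # first layer
    if activation is not None:
        h = activation(h)        # optional non-linearity
    return w2 * h + b2           # second layer

def collapsed(x):
    # Without an activation, the two layers reduce to a single linear map:
    # w2 * (w1 * x + b1) + b2 == (w2 * w1) * x + (w2 * b1 + b2)
    return (w2 * w1) * x + (w2 * b1 + b2)

for x in (-1.0, 0.0, 1.0, 2.0):
    print(x, two_layer(x), collapsed(x), two_layer(x, sigmoid))
```

For an affine function, `f(0) + f(2)` equals `2 * f(1)`; that holds for the activation-free network and fails once the sigmoid is inserted, which is what the added non-linearity means in practice.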

I just published a blog about Asymmetric Tanh Pi 4 for Deep Neural Nets link.medium.com/rINE3VxixQb and corresponding github.com/Mastermindless… #DeepLearning #ArtificialIntelligence #ActivationFunctions #MachineLearning #NeuralNetworks #ResNet #CustomTanh #AIResearch #GradientFlow

Building a ReLU Activation Function from Scratch in Python youtube.com/watch?v=Qovt6U… #stem #neuralnetworks #activationfunctions #machinelearning #pythonprogramming #datascience

Unlock your model's potential by selecting the ideal activation function! Enhance performance and accuracy with the right choice. #MachineLearning #AI #ActivationFunctions #DeepLearning #DataScience

🧠 In Machine Learning, we often talk about activation functions like ReLU, Sigmoid, or Tanh. But what truly powers learning behind the scenes? Read more: insightai.global/derivative-of-… #AI #ML #ActivationFunctions #Relu #Sigmoid #Tanh

Graphical Representation of #ActivationFunctions #AI #MachineLearning #ANN #CNN #ArtificialIntelligence #ML #DataScience #Data #Database #Python #programming #DeepLearning #DataAnalytics #DataScientist #DATA #coding #newbies #100daysofcoding

10 Activation Functions Every Data Scientist Should Know About - websystemer.no/10-activation-… #activationfunctions #artificialintelligence #deeplearning #machinelearning #statistics

🧠📊 Three most commonly used Activation Functions in Neural Networks: Linear, Sigmoid, ReLU. 🚀 Understand how these functions shape AI learning! Follow on LinkedIn: Amit Subhash Chejara 💡💻 #NeuralNetworks #ActivationFunctions #AI

ReLU (Rectified Linear Unit) - It's a simple yet powerful activation function that: ⚡ Introduces non-linearity, enabling complex pattern learning. ⚡ Speeds up training with efficient computation. #deeplearning #activationfunctions #neuralnetworks

What is the Sigmoid Function? How it is implemented in Logistic Regression? - websystemer.no/what-is-the-si… #activationfunctions #datascience #logisticregression #machinelearning #sigmoid

Mish Activation Function In YOLOv4 - websystemer.no/mish-activatio… #activationfunctions #bagofspecials #machinelearning #mishactivation #yolov4

Manual Of Activations in Deep Learning - websystemer.no/manual-of-acti… #activationfunctions #datascience #machinelearning
